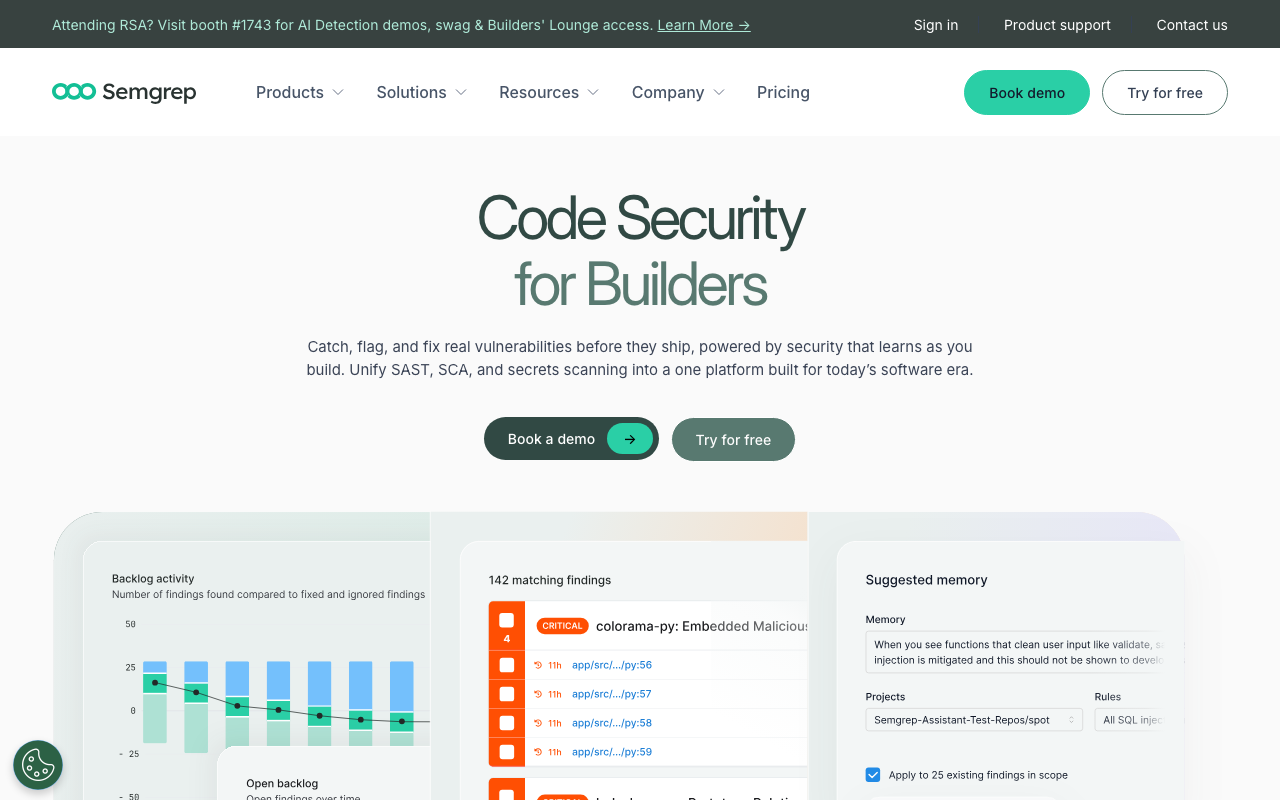

Getting Started with Semgrep CLI: Installation and First Scan

Semgrep CLI tutorial covering installation via pip, brew, and Docker, first scan, rulesets, output formats, ignoring findings, and CI basics.


Why learn the Semgrep CLI

Semgrep CLI is a fast, open-source command-line tool for static analysis that finds bugs, security vulnerabilities, and anti-patterns in your code. Unlike heavyweight SAST tools that require complex server installations and proprietary configurations, Semgrep runs directly in your terminal, finishes most scans in seconds, and uses pattern syntax that mirrors the source code you are already writing. It supports over 30 programming languages and ships with thousands of pre-written rules maintained by the security community.

Whether you are a solo developer looking to catch SQL injection before it ships or a team lead evaluating static analysis tools for your CI pipeline, the Semgrep CLI is the starting point. Every feature of the broader Semgrep platform - cloud dashboards, PR comments, AI-powered triage - builds on top of this command-line foundation. Learning the CLI first gives you the knowledge to configure, debug, and optimize Semgrep in any environment.

This Semgrep CLI tutorial walks through installation, running your first scan, choosing rulesets, working with output formats, ignoring findings, targeting specific files, integrating with CI, and tuning performance. By the end, you will have a working Semgrep setup that you can use locally and extend into your automated pipelines.

Installing Semgrep CLI

Semgrep provides three official installation methods. Choose the one that matches your environment and workflow.

Install with pip (recommended for all platforms)

The pip installation is the most common method and works on macOS, Linux, and Windows via WSL. You need Python 3.8 or later.

# Install Semgrep globally

pip install semgrep

# Verify the installation

semgrep --version

If you prefer to keep Semgrep isolated from your system Python packages, use pipx instead:

# Install with pipx for an isolated environment

pipx install semgrep

# Verify

semgrep --version

The pipx approach is particularly useful on machines where you manage multiple Python projects and want to avoid dependency conflicts. Semgrep bundles its own binary components alongside the Python package, so isolation prevents any interference with other tools.

Install with Homebrew (macOS)

On macOS, Homebrew provides a clean one-command installation:

# Install via Homebrew

brew install semgrep

# Verify

semgrep --version

Homebrew handles all dependencies automatically and makes upgrades straightforward with brew upgrade semgrep. This method is preferred by macOS users who already rely on Homebrew for their development tooling.

Install with Docker (any platform)

Docker is the best option for CI environments, shared build servers, or situations where you do not want to install anything on the host system:

# Pull the official Semgrep image

docker pull semgrep/semgrep

# Run a scan with Docker

docker run --rm -v "${PWD}:/src" semgrep/semgrep semgrep --config auto /src

The -v "${PWD}:/src" flag mounts your current working directory into the container at /src. This means Semgrep inside the container can read your source files without any of its dependencies touching your host system. The --rm flag removes the container after the scan finishes.

For repeated use, you can create a shell alias:

alias semgrep='docker run --rm -v "${PWD}:/src" semgrep/semgrep semgrep'

With this alias in place, you can run semgrep --config auto /src as if Semgrep were installed locally.

Verifying your installation

Regardless of which method you chose, confirm that Semgrep is working:

semgrep --version

# Expected output: semgrep 1.x.x

If you see a version number, the installation was successful. If you get a “command not found” error after a pip installation, your Python scripts directory is likely not in your PATH. On Linux, add ~/.local/bin to your PATH. On macOS, the path is typically ~/Library/Python/3.x/bin. You can fix this permanently by adding the following line to your shell profile:

export PATH="$HOME/.local/bin:$PATH"

For a more detailed walkthrough of the full Semgrep setup process including cloud configuration, see the guide on how to setup Semgrep.
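If the "command not found" error persists, a quick check of what your shell can actually see may help. The following is a minimal Python sketch, not part of Semgrep itself: the helper names find_executable and missing_path_dirs are made up for illustration, and ~/.local/bin is assumed to be the Linux location mentioned above.

```python
import os
import shutil
from typing import Optional


def find_executable(name: str, path_env: Optional[str] = None) -> Optional[str]:
    """Return the full path of `name` if it is on the search path, else None."""
    # shutil.which searches the given PATH string (or the process PATH when None).
    return shutil.which(name, path=path_env)


def missing_path_dirs(candidates: list[str], path_env: str) -> list[str]:
    """Return the candidate directories that are not listed in path_env."""
    listed = {os.path.normpath(p) for p in path_env.split(os.pathsep) if p}
    return [d for d in candidates if os.path.normpath(d) not in listed]


if __name__ == "__main__":
    location = find_executable("semgrep")
    if location:
        print(f"semgrep found at {location}")
    else:
        # Assumption: pip placed the script in ~/.local/bin; adjust for your system.
        user_bin = os.path.expanduser("~/.local/bin")
        missing = missing_path_dirs([user_bin], os.environ.get("PATH", ""))
        print("semgrep not found on PATH; consider adding:", missing)
```

Running the script before and after editing your shell profile shows whether the PATH change took effect in a new terminal session.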

Running your first scan

With Semgrep installed, you can scan any project immediately - no configuration files, no accounts, and no rule definitions required.

The auto config scan

Navigate to any project directory and run:

semgrep --config auto

The --config auto flag tells Semgrep to inspect your project, detect which languages and frameworks are present, and automatically download the relevant rules from the Semgrep Registry. It then runs those rules against your code and prints any findings to the terminal. This is the fastest way to see what Semgrep can do.

A typical first scan on a medium-sized project takes between 5 and 30 seconds. Semgrep does not need to compile your code or resolve dependencies - it performs pattern matching directly on source files, which is why it runs so quickly compared to traditional SAST tools.

Understanding the output

When Semgrep finds an issue, the output looks like this:

src/api/users.py
  python.lang.security.audit.dangerous-system-call
    Detected subprocess call with shell=True. This can lead to
    command injection vulnerabilities.

    22│ subprocess.call(cmd, shell=True)

Each finding includes four pieces of information: the file path where the issue was found, the rule ID that triggered the match, a human-readable message explaining the problem, and the exact line of code that matched. The rule ID is important because you will use it later to configure suppressions and to look up rule documentation in the Semgrep Registry.

Scanning with a specific rule set

For more control over what Semgrep checks, specify a rule set by name instead of using auto:

# High-confidence security and correctness rules

semgrep --config p/default

# Broader security coverage with more findings

semgrep --config p/security-audit

# OWASP Top 10 vulnerability categories

semgrep --config p/owasp-top-ten

# Language-specific rules

semgrep --config p/python

semgrep --config p/javascript

semgrep --config p/golang

The p/default rule set is the best starting point for most teams. It contains rules curated by the Semgrep team for high confidence and low false positive rates. Once you are comfortable reviewing findings from p/default, you can layer on additional sets like p/security-audit for broader coverage.

For a deeper explanation of how to build and manage your own rule sets, see the guide on Semgrep custom rules.

Choosing the right rulesets

Rulesets determine what Semgrep looks for in your code. Choosing the right combination affects both the quality of findings and the amount of noise your team needs to triage.

Core rulesets

p/default contains approximately 600 high-confidence rules covering security vulnerabilities and correctness issues. These rules are maintained by the Semgrep team and have been tuned for low false positive rates across a wide range of codebases. This is the right starting point for every team.

p/security-audit is a broader collection that trades precision for coverage. It catches more potential issues but produces more findings that require manual review. Use this when you want comprehensive security scanning and have the bandwidth to triage additional results.

p/owasp-top-ten maps rules directly to the OWASP Top 10 categories - injection, broken access control, cryptographic failures, and so on. This set is valuable for compliance-driven teams that need to demonstrate OWASP coverage in audits or security reviews.

Language and framework rulesets

Semgrep provides curated rulesets for specific languages:

| Ruleset | Coverage |
|---|---|
| p/python | Python security, Django, Flask patterns |
| p/javascript | JavaScript and Node.js security |
| p/typescript | TypeScript-specific patterns |
| p/golang | Go security and error handling |
| p/java | Java security and Spring patterns |
| p/ruby | Ruby and Rails security |
| p/csharp | C# security patterns |
| p/php | PHP security patterns |

Infrastructure rulesets

For infrastructure-as-code files, Semgrep offers dedicated rulesets:

| Ruleset | Coverage |
|---|---|
| p/terraform | Terraform misconfigurations |
| p/dockerfile | Dockerfile security best practices |
| p/docker-compose | Docker Compose issues |
| p/kubernetes | Kubernetes YAML security |

Combining multiple rulesets

You can stack multiple rulesets in a single scan by passing multiple --config flags:

semgrep --config p/default --config p/security-audit --config p/python

Start with p/default alone, review those findings, and then add additional sets one at a time. Adding too many rulesets at once generates an overwhelming volume of findings and makes it difficult to prioritize what to fix first.

Output formats - JSON, SARIF, and more

Semgrep’s default output is human-readable terminal text, but most real-world workflows require structured output for integration with other tools, dashboards, or compliance systems.

JSON output

JSON is the most versatile format for programmatic processing:

semgrep --config p/default --json > results.json

The JSON output contains an array of findings, each with the rule ID, file path, line and column numbers, matched code snippet, severity, and metadata including CWE identifiers and OWASP categories. You can pipe this output into jq, Python scripts, or any tool that consumes JSON.
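To make the shape of that JSON concrete, here is a small Python sketch that flattens a report into one record per finding. It assumes the report uses the fields results[].check_id, path, start.line, extra.severity, and extra.message; the Finding class, the parse_findings helper, and the sample text are illustrative, not real scan output.

```python
import json
from dataclasses import dataclass


@dataclass
class Finding:
    rule_id: str
    path: str
    line: int
    severity: str
    message: str


def parse_findings(report_text: str) -> list[Finding]:
    """Turn the text of a --json report into a flat list of Finding records."""
    report = json.loads(report_text)
    findings = []
    for result in report.get("results", []):
        extra = result.get("extra", {})
        findings.append(
            Finding(
                rule_id=result["check_id"],
                path=result["path"],
                line=result["start"]["line"],
                severity=extra.get("severity", "UNKNOWN"),
                message=extra.get("message", ""),
            )
        )
    return findings


# Hand-written sample in the assumed report shape (not real scan output).
SAMPLE = """
{"results": [{"check_id": "python.lang.security.audit.dangerous-system-call",
  "path": "src/api/users.py",
  "start": {"line": 22, "col": 5},
  "end": {"line": 22, "col": 37},
  "extra": {"severity": "ERROR",
            "message": "Detected subprocess call with shell=True."}}],
 "errors": []}
"""

if __name__ == "__main__":
    for finding in parse_findings(SAMPLE):
        print(f"{finding.severity} {finding.path}:{finding.line} {finding.rule_id}")
```

In practice you would read results.json from disk and pass its contents to parse_findings instead of the sample string.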

# Count findings by severity

semgrep --config p/default --json | jq '[.results[] | .extra.severity] | group_by(.) | map({(.[0]): length}) | add'

SARIF output

SARIF (Static Analysis Results Interchange Format) is the industry standard for static analysis results. GitHub Code Scanning, Azure DevOps, and many security platforms consume SARIF natively:

semgrep --config p/default --sarif > results.sarif

SARIF output is particularly useful for Semgrep GitHub Action workflows where you want findings to appear in the GitHub Security tab:

# Generate a SARIF file that a later CI step can upload to GitHub Code Scanning
semgrep --config p/default --sarif --output results.sarif

JUnit XML output

For CI systems that expect JUnit-style test results:

semgrep --config p/default --junit-xml > results.xml

This format allows Semgrep findings to appear as “test failures” in CI dashboards that support JUnit reporting, such as Jenkins, CircleCI, and GitLab CI.

Writing output to a file

Use the --output flag to write results to a file while keeping the terminal output clean:

# Write JSON to a file

semgrep --config p/default --json --output results.json

# Write SARIF to a file

semgrep --config p/default --sarif --output results.sarif

This is useful when you want scan progress in the terminal during development and machine-readable findings in a file for downstream processing.

Ignoring findings

Not every finding requires a code change. Test files, generated code, and known-safe patterns all produce findings that you need a way to suppress without losing track of real issues.

Inline nosemgrep comments

The most granular suppression method is the nosemgrep comment, placed either at the end of the first flagged line or on the line immediately before it:

# nosemgrep: python.lang.security.audit.dangerous-system-call

subprocess.call(safe_internal_command, shell=True)

In JavaScript or TypeScript:

// nosemgrep: javascript.express.security.audit.xss.mustache-escape

res.send(trustedHtmlContent);

In Go:

// nosemgrep: go.lang.security.audit.dangerous-exec-command

exec.Command("safe-binary", args...)

Always include the specific rule ID in the nosemgrep comment. A bare # nosemgrep without a rule ID suppresses all Semgrep rules on that line, which could hide future findings from new rules that you actually want to see.
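One way to enforce the "always include a rule ID" habit is a small check in code review or pre-commit. The sketch below is a hypothetical helper, not a Semgrep feature: it flags lines where nosemgrep appears without a colon and rule ID after it, using a plain regular expression rather than Semgrep's own comment parsing.

```python
import re

# Matches "nosemgrep" that is NOT followed by ": <rule-id>".
BARE_NOSEMGREP = re.compile(r"\bnosemgrep\b(?!\s*:\s*\S)")


def find_bare_suppressions(source: str) -> list[int]:
    """Return 1-based line numbers that contain a nosemgrep comment without a rule ID."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if BARE_NOSEMGREP.search(line)
    ]


# Illustrative input: line 1 names a rule, line 3 is a blanket suppression.
SAMPLE = """\
# nosemgrep: python.lang.security.audit.dangerous-system-call
subprocess.call(safe_internal_command, shell=True)
subprocess.call(other_command, shell=True)  # nosemgrep
"""

if __name__ == "__main__":
    for line_number in find_bare_suppressions(SAMPLE):
        print(f"line {line_number}: nosemgrep without a rule ID")
```

Because the check is purely textual, it also flags the word in string literals; treat the result as a prompt for review rather than a hard failure.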

The .semgrepignore file

For broader exclusions, create a .semgrepignore file in your repository root. The syntax follows .gitignore conventions:

# Test files

tests/

test/

*_test.go

*_test.py

*.test.js

*.test.ts

*.spec.js

*.spec.ts

# Generated code

generated/

__generated__/

*.generated.ts

# Vendored dependencies

vendor/

node_modules/

third_party/

# Build artifacts

dist/

build/

.next/

# Large files that slow down scanning

*.min.js

*.bundle.js

package-lock.json

The .semgrepignore file is the right place for paths that should never be scanned - code you do not own, code you cannot change, and code where findings are not actionable.
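To build intuition for how such patterns select paths, here is a deliberately simplified Python approximation. It is not Semgrep's matcher and does not implement full .gitignore semantics: it assumes only two kinds of pattern, directory names ending in a slash and file-name globs, and the helper names load_patterns and is_ignored are made up for this sketch.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath


def load_patterns(text: str) -> list[str]:
    """Read ignore patterns from file text, skipping blank lines and comments."""
    stripped = (line.strip() for line in text.splitlines())
    return [line for line in stripped if line and not line.startswith("#")]


def is_ignored(path: str, patterns: list[str]) -> bool:
    """Simplified check: does a relative POSIX path match any pattern?"""
    parts = PurePosixPath(path).parts
    if not parts:
        return False
    for pattern in patterns:
        if pattern.endswith("/"):
            # Directory pattern: matches when any parent directory has that name.
            if pattern.rstrip("/") in parts[:-1]:
                return True
        elif fnmatchcase(parts[-1], pattern):
            # File pattern: compared against the file name only.
            return True
    return False


if __name__ == "__main__":
    patterns = load_patterns("# Test files\ntests/\n\n*.min.js\nnode_modules/\n")
    for candidate in ["tests/test_api.py", "web/app.min.js", "src/api/users.py"]:
        print(candidate, "ignored" if is_ignored(candidate, patterns) else "scanned")
```

Real ignore files support more syntax than this, so use the sketch only to reason about simple cases, and confirm the actual behavior by running a scan.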

Excluding specific rules

If a particular rule from a registry set consistently produces false positives in your codebase, you can exclude it from the scan entirely:

semgrep --config p/default --exclude-rule "generic.secrets.gitleaks.generic-api-key"

This is a better approach than removing an entire ruleset because of one noisy rule. You keep the coverage from all other rules in the set while silencing the specific one that does not work for your project.
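Before excluding anything, it helps to know which rules actually produce the most findings. The following Python sketch counts findings per rule in a JSON report, assuming each entry in results carries a check_id field; noisiest_rules and the sample data are illustrative only.

```python
import json
from collections import Counter


def noisiest_rules(report_text: str, top: int = 5) -> list[tuple[str, int]]:
    """Return the `top` rule IDs with the most findings, most frequent first."""
    report = json.loads(report_text)
    counts = Counter(result["check_id"] for result in report.get("results", []))
    return counts.most_common(top)


# Hand-written sample in the assumed report shape (not real scan output).
SAMPLE = json.dumps({"results": [
    {"check_id": "generic.secrets.gitleaks.generic-api-key"},
    {"check_id": "generic.secrets.gitleaks.generic-api-key"},
    {"check_id": "python.lang.security.audit.dangerous-system-call"},
]})

if __name__ == "__main__":
    for rule_id, count in noisiest_rules(SAMPLE):
        print(f"{count:4d}  {rule_id}")
```

A rule that dominates the list is a candidate for review: if its findings are mostly false positives in your codebase, pass its ID to --exclude-rule; if they are real, fix them instead.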

Scanning specific files and directories

You do not always need to scan your entire repository. Targeting specific paths speeds up iteration during development and helps you focus on the code that matters most.

Scanning a single file

semgrep --config p/default src/auth/login.py

Scanning a directory

semgrep --config p/default src/api/

Scanning multiple paths

semgrep --config p/default src/auth/ src/api/ src/middleware/

Using include and exclude patterns

The --include and --exclude flags accept glob patterns for fine-grained control:

# Scan only Python files

semgrep --config p/default --include "*.py"

# Scan only JavaScript and TypeScript files

semgrep --config p/default --include "*.js" --include "*.ts"

# Exclude test directories

semgrep --config p/default --exclude "tests/" --exclude "test/"

# Combine include and exclude

semgrep --config p/default --include "*.py" --exclude "tests/"

Practical examples

During development, you often want to scan only the files you have changed. Combine Semgrep with git to achieve this:

# Scan files changed by the last commit, plus any uncommitted changes
semgrep --config p/default $(git diff --name-only HEAD~1)

# Scan only staged files
semgrep --config p/default $(git diff --cached --name-only)

# Scan only files changed on the current branch
semgrep --config p/default $(git diff --name-only main...HEAD)

This approach gives you rapid feedback during development without waiting for a full-repository scan.
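The shell one-liners have two rough edges: deleted files still appear in the git output, and an empty file list leaves Semgrep with no explicit target, in which case it would typically fall back to scanning the current directory. A minimal Python sketch that handles both cases follows; changed_files and build_scan_command are hypothetical helper names, and --diff-filter=d is the standard git option for leaving out deleted paths.

```python
import os
import subprocess


def changed_files(base: str = "HEAD~1") -> list[str]:
    """List files that differ from `base`, leaving out deleted paths."""
    output = subprocess.run(
        ["git", "diff", "--name-only", "--diff-filter=d", base],
        check=True,
        capture_output=True,
        text=True,
    ).stdout
    return [line for line in output.splitlines() if line]


def build_scan_command(paths: list[str], config: str = "p/default") -> list[str]:
    """Build the semgrep argument list, or an empty list when nothing needs scanning."""
    existing = [p for p in paths if os.path.isfile(p)]
    if not existing:
        return []
    return ["semgrep", "--config", config, *existing]


if __name__ == "__main__":
    command = build_scan_command(changed_files())
    if command:
        subprocess.run(command)
    else:
        print("No changed files to scan.")
```

Returning an empty list for "nothing to scan" keeps the caller from launching a full-repository scan by accident.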

CI integration basics

Running Semgrep in your CI pipeline ensures every pull request and every merge is automatically scanned. Here is how to set up the most common integration.

GitHub Actions

Create .github/workflows/semgrep.yml:

name: Semgrep
on:
  pull_request: {}
  push:
    branches:
      - main
jobs:
  semgrep:
    name: Semgrep Scan
    runs-on: ubuntu-latest
    container:
      image: semgrep/semgrep
    steps:
      - name: Checkout code
        uses: actions/checkout@v4
      - name: Run Semgrep
        run: semgrep scan --config p/default --error

The --error flag causes Semgrep to exit with a non-zero code when findings are detected, which fails the GitHub Actions check and can block PRs from merging if branch protection is configured.
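If you wrap Semgrep in your own tooling, it is worth distinguishing "findings were reported" from "the scan itself failed". The Python sketch below assumes that exit code 0 means a clean scan, 1 means findings were reported with --error, and any other value means the scan did not complete normally; check the documentation for your Semgrep version before relying on that mapping. The names classify_exit and run_scan are illustrative.

```python
import subprocess
import sys


def classify_exit(code: int) -> str:
    """Map a process exit code to a coarse scan outcome (assumed mapping)."""
    if code == 0:
        return "clean"
    if code == 1:
        return "findings"
    return "error"


def run_scan(argv: list[str]) -> str:
    """Run the given command and classify its exit code."""
    completed = subprocess.run(argv)
    return classify_exit(completed.returncode)


if __name__ == "__main__":
    outcome = run_scan(["semgrep", "scan", "--config", "p/default", "--error"])
    print(f"scan outcome: {outcome}")
    # Fail the calling job for findings and for scan errors alike.
    sys.exit(0 if outcome == "clean" else 1)
```

Keeping the classification in one function makes it easy to treat scan errors differently later, for example retrying them instead of blocking a merge.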

For teams using Semgrep Cloud, replace the scan command with semgrep ci and add your token:

- name: Run Semgrep
  run: semgrep ci
  env:
    SEMGREP_APP_TOKEN: ${{ secrets.SEMGREP_APP_TOKEN }}

The semgrep ci command automatically performs diff-aware scanning on pull requests, uploads results to the Semgrep dashboard, and posts inline PR comments when the Semgrep GitHub App is installed. For a complete walkthrough of this setup, see the guide on Semgrep GitHub Action.

GitLab CI

Add a Semgrep job to your .gitlab-ci.yml:

semgrep:
  image: semgrep/semgrep
  script:
    - semgrep scan --config p/default --error
  rules:
    - if: $CI_MERGE_REQUEST_IID
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH

Other CI platforms

Semgrep works with any CI platform that can run a shell command. The pattern is the same everywhere: install Semgrep (or use the Docker image), run semgrep scan --config p/default --error, and let the exit code determine whether the build passes or fails. Documented integrations exist for Jenkins, CircleCI, Buildkite, and Azure Pipelines.

SARIF upload for GitHub Code Scanning

To see Semgrep findings in the GitHub Security tab alongside CodeQL results:

- name: Run Semgrep
  run: semgrep scan --config p/default --sarif --output results.sarif
- name: Upload SARIF
  uses: github/codeql-action/upload-sarif@v3
  with:
    sarif_file: results.sarif
  if: always()

The if: always() condition ensures results are uploaded even when Semgrep finds issues and returns a non-zero exit code.
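When a SARIF upload does not show what you expect, inspecting the file locally can help. The sketch below lists rule, file, and line for each result, using the standard SARIF 2.1.0 layout (runs, results, locations, physicalLocation). The sarif_locations helper and the sample document are illustrative and hand-written, not actual Semgrep output.

```python
import json


def sarif_locations(sarif_text: str) -> list[tuple[str, str, int]]:
    """Return (rule_id, file_uri, start_line) for each result location in a SARIF log."""
    log = json.loads(sarif_text)
    rows = []
    for run in log.get("runs", []):
        for result in run.get("results", []):
            for location in result.get("locations", []):
                physical = location.get("physicalLocation", {})
                uri = physical.get("artifactLocation", {}).get("uri", "")
                line = physical.get("region", {}).get("startLine", 0)
                rows.append((result.get("ruleId", ""), uri, line))
    return rows


# Hand-written sample following the SARIF 2.1.0 structure.
SAMPLE = """
{"version": "2.1.0",
 "runs": [{"tool": {"driver": {"name": "Semgrep"}},
           "results": [{"ruleId": "python.lang.security.audit.dangerous-system-call",
                        "level": "error",
                        "message": {"text": "Detected subprocess call with shell=True."},
                        "locations": [{"physicalLocation": {
                            "artifactLocation": {"uri": "src/api/users.py"},
                            "region": {"startLine": 22}}}]}]}]}
"""

if __name__ == "__main__":
    for rule_id, uri, line in sarif_locations(SAMPLE):
        print(f"{uri}:{line}  {rule_id}")
```

An empty list from a file you expected to contain findings usually points at the scan step rather than the upload step.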

Performance tuning

Semgrep is fast out of the box, but large repositories and complex rulesets can push scan times higher than you want for a CI check. Here are the most effective tuning options.

Control parallelism with --jobs

Semgrep runs scans in parallel by default. On machines with limited memory, reducing parallelism prevents out-of-memory errors:

# Use 2 parallel jobs instead of the default

semgrep --config p/default --jobs 2

# Run sequentially (useful for debugging)

semgrep --config p/default --jobs 1

Skip large files with --max-target-bytes

Minified JavaScript, bundled files, and lock files can be enormous and slow down scanning without producing useful findings:

# Skip files larger than 500KB

semgrep --config p/default --max-target-bytes 500000

Combine this with .semgrepignore entries for *.min.js, *.bundle.js, and package-lock.json to eliminate the most common offenders permanently.
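To decide which files deserve a permanent .semgrepignore entry, you can list everything above the size limit first. This is a minimal Python sketch with a hypothetical helper, files_over_limit; it compares plain file sizes and makes no claim about exactly how Semgrep applies the threshold at the boundary.

```python
import os


def files_over_limit(root: str, max_bytes: int = 500_000) -> list[tuple[str, int]]:
    """Return (relative_path, size) for files larger than max_bytes, largest first."""
    oversized = []
    for directory, _subdirs, names in os.walk(root):
        for name in names:
            full = os.path.join(directory, name)
            try:
                size = os.path.getsize(full)
            except OSError:
                # Broken symlinks or unreadable files are skipped.
                continue
            if size > max_bytes:
                oversized.append((os.path.relpath(full, root), size))
    return sorted(oversized, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for path, size in files_over_limit("."):
        print(f"{size:>12,d}  {path}")
```

Paths that show up here on every run, such as bundles and lock files, are good candidates for .semgrepignore, while the size flag remains a safety net for anything new.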

Set per-rule timeouts

Some rules on certain files can take an unusually long time. Set a per-rule timeout to prevent any single rule from stalling the entire scan:

# 30-second timeout per rule per file

semgrep --config p/default --timeout 30

If a rule exceeds the timeout on a given file, Semgrep skips that rule-file combination and continues scanning. The skipped match is reported in the scan summary so you know it happened.

Cap memory usage

For CI runners with limited memory, set an upper bound:

# Limit memory to roughly 4GB (the value is given in MiB)
semgrep --config p/default --max-memory 4000

Diff-aware scanning in CI

The single most impactful performance optimization for CI is diff-aware scanning. Instead of scanning every file in the repository, Semgrep analyzes only the files changed in the current pull request:

# semgrep ci automatically does diff-aware scanning on PRs

semgrep ci

With diff-aware scanning, the median CI scan time drops to approximately 10 seconds regardless of repository size. This is because most pull requests touch only a handful of files, and Semgrep only needs to run rules against those specific changes.

Practical tuning configuration

For a large repository with CI time constraints, a well-tuned command looks like this:

semgrep scan \
  --config p/default \
  --exclude "vendor/" \
  --exclude "node_modules/" \
  --exclude "*.min.js" \
  --max-target-bytes 500000 \
  --timeout 30 \
  --max-memory 4000 \
  --error

This configuration runs the high-confidence default rules, skips vendored code and large files, prevents individual rules from hanging, caps memory usage, and fails the build when real findings are detected.
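If several pipelines share these settings, generating the command from one place avoids drift between them. The following Python sketch is one possible approach, not an official interface: ScanOptions and to_argv are made-up names, and only flags already shown in this tutorial are emitted. The field name max_memory_mib reflects the assumption that --max-memory takes a value in MiB.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScanOptions:
    """Hypothetical container for the tuning flags used in this tutorial."""
    configs: list[str] = field(default_factory=lambda: ["p/default"])
    excludes: list[str] = field(default_factory=list)
    max_target_bytes: Optional[int] = None
    timeout_seconds: Optional[int] = None
    max_memory_mib: Optional[int] = None
    fail_on_findings: bool = True


def to_argv(options: ScanOptions) -> list[str]:
    """Translate ScanOptions into a `semgrep scan` argument list."""
    argv = ["semgrep", "scan"]
    for config in options.configs:
        argv += ["--config", config]
    for pattern in options.excludes:
        argv += ["--exclude", pattern]
    if options.max_target_bytes is not None:
        argv += ["--max-target-bytes", str(options.max_target_bytes)]
    if options.timeout_seconds is not None:
        argv += ["--timeout", str(options.timeout_seconds)]
    if options.max_memory_mib is not None:
        argv += ["--max-memory", str(options.max_memory_mib)]
    if options.fail_on_findings:
        argv.append("--error")
    return argv


if __name__ == "__main__":
    options = ScanOptions(
        excludes=["vendor/", "node_modules/", "*.min.js"],
        max_target_bytes=500000,
        timeout_seconds=30,
        max_memory_mib=4000,
    )
    print(" ".join(to_argv(options)))
```

Passing the resulting list to subprocess.run avoids shell quoting issues with glob patterns such as *.min.js.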

Consider CodeAnt AI as a complementary tool

While Semgrep excels at pattern-based static analysis, CodeAnt AI takes a different approach by combining AI-powered code review with SAST, secret detection, and infrastructure-as-code scanning in a single platform. Starting at $24/user/month for the Basic plan and $40/user/month for Premium, CodeAnt AI provides line-by-line PR feedback, one-click auto-fix suggestions, and support for over 30 languages.

CodeAnt AI is worth evaluating if you want an all-in-one platform that handles both the AI review layer and the deterministic security scanning layer. Many teams run Semgrep CLI for its deep rule customization alongside CodeAnt AI for broader automated review coverage. For a broader comparison of tools in this space, see the roundup of Semgrep alternatives.

Semgrep CLI command reference

Here is a quick reference for the commands and flags covered in this tutorial:

| Command | Purpose |
|---|---|
| semgrep --config auto | Auto-detect languages and scan with relevant rules |
| semgrep --config p/default | Scan with the curated high-confidence ruleset |
| semgrep --config p/default --json | Output findings in JSON format |
| semgrep --config p/default --sarif | Output findings in SARIF format |
| semgrep --config p/default --error | Exit with non-zero code on findings (for CI) |
| semgrep --config p/default --include "*.py" | Scan only Python files |
| semgrep --config p/default --exclude "tests/" | Skip the tests directory |
| semgrep --config p/default --jobs 2 | Limit parallel scanning jobs |
| semgrep --config p/default --timeout 30 | Set per-rule timeout in seconds |
| semgrep --config p/default --max-target-bytes 500000 | Skip files larger than 500KB |
| semgrep --config p/default --max-memory 4000 | Cap memory usage at 4GB |
| semgrep --exclude-rule "rule.id" | Exclude a specific rule from the scan |
| semgrep ci | CI-optimized scan with diff-awareness |

Next steps after your first scan

Once you have Semgrep CLI running locally and producing findings, the natural progression is to deepen your configuration and integrate it into your team workflow:

- Add Semgrep to CI - follow the Semgrep GitHub Action guide to scan every pull request automatically

- Write custom rules - the Semgrep custom rules guide covers pattern syntax, metavariables, and testing

- Evaluate pricing options - the Semgrep pricing breakdown explains what you get at each tier

- Set up the full platform - the how to setup Semgrep guide covers Semgrep Cloud, PR comments, and policy management

- Compare with alternatives - if you want to evaluate other options, Semgrep alternatives covers SonarQube, Snyk Code, Checkmarx, and more

Semgrep CLI is a foundation that scales from a single developer running quick scans on a laptop to enterprise teams scanning millions of lines across hundreds of repositories. The key is to start simple with semgrep --config auto, build confidence in the findings, and expand your configuration as your team’s needs grow.

Frequently Asked Questions

How do I install Semgrep CLI on my machine?

The fastest way to install Semgrep CLI is with pip by running 'pip install semgrep'. On macOS you can also use Homebrew with 'brew install semgrep'. For containerized environments, pull the official Docker image with 'docker pull semgrep/semgrep' and mount your source directory into the container. After installation, verify everything works by running 'semgrep --version' in your terminal. Python 3.8 or later is required for the pip method.

What is the difference between semgrep scan and semgrep ci?

The 'semgrep scan' command runs a local scan against your codebase using rule sets you specify on the command line. The 'semgrep ci' command is designed for CI/CD pipelines and adds diff-aware scanning, automatic rule configuration from Semgrep Cloud policies, result uploading to the Semgrep dashboard, and PR comment integration. Use 'semgrep scan' for local development and 'semgrep ci' in your automated pipelines.

Which Semgrep rule set should I use for my first scan?

Start with 'semgrep --config auto' which automatically detects the languages and frameworks in your project and selects relevant rules. If you want more control, use 'semgrep --config p/default' which includes high-confidence security and correctness rules with low false positive rates. Avoid starting with broad sets like p/security-audit until you are comfortable triaging findings from the default set.

How do I get Semgrep output in JSON or SARIF format?

Use the --json flag to get JSON output: 'semgrep --config p/default --json > results.json'. For SARIF format, which is used by GitHub Code Scanning and other security platforms, use '--sarif': 'semgrep --config p/default --sarif > results.sarif'. Semgrep also supports JUnit XML with '--junit-xml' and Emacs-compatible output with '--emacs'. You can combine format flags with '--output filename' to write results to a file while still seeing terminal output.

How do I ignore a specific Semgrep finding in my code?

Add a nosemgrep comment at the end of the first flagged line or on the line immediately before it. Use the format '# nosemgrep: rule-id' in Python, '// nosemgrep: rule-id' in JavaScript or Go, and the appropriate comment syntax for your language. Always include the specific rule ID rather than a blanket suppression so the intent is documented. For broader exclusions, add paths to a .semgrepignore file in your repository root.

Can I scan only specific files or directories with Semgrep?

Yes. Pass file or directory paths as arguments after the config flag: 'semgrep --config p/default src/api/ src/auth/'. You can also use '--include' and '--exclude' flags with glob patterns. For example, '--include "*.py"' scans only Python files and '--exclude "tests/"' skips the tests directory. These flags can be combined to precisely target the code you want to analyze.

How fast is Semgrep CLI compared to other SAST tools?

Semgrep is one of the fastest SAST tools available. Most scans complete in under 30 seconds for a typical codebase, and the median CI scan time is approximately 10 seconds because Semgrep supports diff-aware scanning that analyzes only changed files. This is significantly faster than tools like SonarQube, Checkmarx, or Veracode, which can take minutes to hours for comparable analysis. Semgrep achieves this speed by running pattern matching directly on the source code without requiring a full compilation or build step.

Does Semgrep CLI work on Windows?

Semgrep CLI does not run natively on Windows. The recommended approach for Windows users is to use Windows Subsystem for Linux (WSL) and install Semgrep via pip within the WSL environment. Alternatively, you can run Semgrep through Docker on Windows by using 'docker run semgrep/semgrep' with your source directory mounted as a volume. Both approaches provide the full Semgrep feature set on Windows machines.

What languages does Semgrep support for scanning?

Semgrep supports over 30 programming languages including Python, JavaScript, TypeScript, Java, Go, Ruby, C, C++, C#, Rust, Kotlin, Swift, PHP, Scala, Terraform, Dockerfile, and Kubernetes YAML. The open-source engine provides full support for all these languages. The Semgrep Pro engine, available through Semgrep Cloud, adds cross-file and cross-function dataflow analysis for a subset of these languages.

How do I speed up Semgrep scans on a large repository?

Several techniques help: use '--exclude' to skip directories like vendor/, node_modules/, and build artifacts. Set '--max-target-bytes 500000' to skip very large files. Use '--jobs N' to control parallelism and reduce memory pressure. Add a .semgrepignore file to permanently exclude paths that do not need scanning. In CI, use 'semgrep ci' which automatically performs diff-aware scanning to analyze only changed files instead of the full repository.

Is Semgrep CLI free to use?

Yes, Semgrep CLI is fully free and open source under the LGPL-2.1 license. It includes over 2,800 community-maintained rules covering security, correctness, and best practices. The Semgrep Cloud platform adds cross-file analysis, 20,000+ Pro rules, AI-powered triage, and a web dashboard - and it is free for teams of up to 10 contributors. Beyond 10 contributors, the Team plan costs $35 per contributor per month.

How do I integrate Semgrep CLI into my CI/CD pipeline?

The simplest approach is to add Semgrep to your CI workflow using the official Docker image. In GitHub Actions, create a workflow that runs 'semgrep ci' with a SEMGREP_APP_TOKEN secret for cloud integration, or 'semgrep scan --config p/default --error' for standalone scanning. The '--error' flag causes Semgrep to exit with a non-zero code when findings are detected, which fails the CI check. Semgrep also works with GitLab CI, Jenkins, CircleCI, Buildkite, and Azure Pipelines.
