Best Code Review Tools for Python in 2026 - Linters, SAST, and AI

Best Python code review tools in 2026 - Ruff, Pylint, and AI reviewers. Covers type checking, security scanning, Django/Flask analysis, and CI/CD setup.

Why Python needs specialized code review tools

Python ranks at or near the top of the major language popularity indexes in 2026, powering everything from machine learning pipelines and data science notebooks to Django web applications and FastAPI microservices. But Python’s flexibility is also its biggest liability when it comes to code quality. The features that make Python productive for individual developers - dynamic typing, duck typing, implicit truthiness, mutable defaults - create entire categories of bugs that do not exist in statically typed languages.

Consider a simple example that ships to production more often than anyone would like to admit:

    def process_users(users: list[dict], active_only: bool = True):
        results = []
        for user in users:
            # Keep every user when active_only is False
            if not active_only or user["status"] == "active":
                results.append({
                    "name": user["name"],
                    "email": user["email"],
                    "score": user["points"] / user["total_points"],  # ZeroDivisionError
                })
        return results

A generic code review tool might flag the missing docstring or suggest a list comprehension. A Python-specific tool can flag the ZeroDivisionError when total_points is zero, the KeyError when any of those dictionary keys is missing, and the fact that user["status"] could be None in a dynamically typed context. That is the difference between a tool that happens to support Python and a tool that understands Python.
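A defensive version of the function makes those failure modes explicit. This is a minimal sketch: skipping malformed records and reporting a score of 0.0 when total_points is zero are assumptions made for illustration, not behavior prescribed by any tool discussed here.

```python
from typing import Any


def process_users(
    users: list[dict[str, Any]], active_only: bool = True
) -> list[dict[str, Any]]:
    """Return name, email, and score for each user, skipping malformed records."""
    results = []
    for user in users:
        # .get() avoids a KeyError and treats a missing or None status as inactive.
        if active_only and user.get("status") != "active":
            continue
        try:
            name, email = user["name"], user["email"]
            points, total = user["points"], user["total_points"]
        except KeyError:
            continue  # assumption: incomplete records are skipped, not fatal
        # Guard the division instead of letting ZeroDivisionError escape.
        score = points / total if total else 0.0
        results.append({"name": name, "email": email, "score": score})
    return results
```

Whether a bad record should be skipped, logged, or rejected is a product decision; the point is that each failure path is now a visible choice rather than an unhandled exception.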

Dynamic typing creates unique review challenges

In Java or Go, the compiler catches type mismatches before code even runs. In Python, a function that expects a list[str] will happily accept a list[int] at runtime - and may even work correctly in some cases while silently producing wrong results in others. Type annotations help, but they are not enforced by the interpreter. You need a separate type checker (mypy, pyright) to validate them, and those type checkers need to be integrated into your review workflow to provide value.

The problem goes deeper than basic type mismatches. Python’s duck typing means that objects with the right method names are treated as compatible, even if their capabilities differ. A regular file handle and a network response stream both support .read(), but a function that later calls .seek() works with the file in tests and then typically raises io.UnsupportedOperation when it is handed the stream in production. Tools that understand Python’s type system at a deep level catch these issues before they reach production.
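One way to make such a requirement checkable is a structural type. The sketch below uses typing.Protocol to state that a function needs both read() and seek(); the names SeekableReader, LineStream, and read_twice are hypothetical and exist only for this illustration.

```python
import io
from typing import Protocol, runtime_checkable


@runtime_checkable
class SeekableReader(Protocol):
    """Structural type: anything that offers both read() and seek()."""

    def read(self, size: int = -1, /) -> str: ...
    def seek(self, offset: int, whence: int = 0, /) -> int: ...


class LineStream:
    """Hypothetical forward-only source: it can read but not seek."""

    def __init__(self, text: str) -> None:
        self._text = text

    def read(self, size: int = -1, /) -> str:
        return self._text


def read_twice(source: SeekableReader) -> tuple[str, str]:
    """Read everything, rewind, and read again."""
    first = source.read()
    source.seek(0)  # a type checker rejects callers that pass LineStream
    return first, source.read()
```

With the annotation in place, mypy or Pyright can reject read_twice(LineStream("...")) during review, while io.StringIO satisfies the protocol. The runtime isinstance check that runtime_checkable enables only verifies that the methods exist, not their signatures.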

Web framework security requires Python-aware analysis

Django and Flask are two of the most deployed web frameworks in production. Both have extensive security features - Django’s ORM prevents SQL injection by default, its template engine auto-escapes HTML, and its middleware handles CSRF protection. But these protections only work if developers use them correctly.

Here is a pattern that passes generic code review tools without a single warning:

    # Django view - looks fine to a generic tool
    def search_users(request):
        query = request.GET.get("q", "")
        # SQL injection: raw query with string formatting
        users = User.objects.raw(f"SELECT * FROM users WHERE name LIKE '%{query}%'")
        return render(request, "results.html", {"users": users})

A generic static analysis tool sees a function that reads a query parameter and returns a rendered template. A Python-aware security scanner like Bandit or Semgrep with Django rules immediately flags the raw SQL with untrusted user input as a critical SQL injection vulnerability. It knows that request.GET.get() is a taint source and objects.raw() is a dangerous sink, and it traces the data flow between them.
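The fix for this class of bug is to bind the user input as a query parameter instead of formatting it into the SQL string. The sketch below shows the idea with the standard-library sqlite3 module so that it runs on its own; the table and data are made up for the example. In Django the equivalent is to pass the parameters separately, for example User.objects.raw("SELECT * FROM users WHERE name LIKE %s", [f"%{query}%"]).

```python
import sqlite3


def search_users(conn: sqlite3.Connection, query: str) -> list[str]:
    """Search by name with the user input bound as a parameter."""
    # The ? placeholder keeps the input as data; it is never parsed as SQL.
    rows = conn.execute(
        "SELECT name FROM users WHERE name LIKE ?",
        (f"%{query}%",),
    )
    return [name for (name,) in rows]


# Hypothetical in-memory table used only to exercise the function.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT)")
conn.executemany("INSERT INTO users VALUES (?)", [("alice",), ("bob",)])
```

A classic injection payload such as ' OR '1'='1 is now just an unusual search string that matches no rows.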

Flask introduces different challenges. Unlike Django, Flask does not enable CSRF protection by default, does not auto-escape Jinja2 templates in all contexts, and does not provide a built-in ORM that prevents SQL injection. Python-specific security tools know which Flask extensions are installed and whether they are configured correctly - something a language-agnostic tool cannot evaluate.

The package ecosystem introduces supply chain risks

PyPI hosts over 500,000 packages, and Python projects tend to have deep dependency trees. A typical Django project might pull in 80-120 transitive dependencies through its requirements.txt or pyproject.toml. Each of those dependencies is a potential attack vector - and unlike npm, pip does not have built-in audit capabilities; the separate pip-audit tool fills that gap.

Tools like Snyk and Semgrep scan your dependency manifest for known vulnerabilities, but the Python-specific challenge goes further. Python packages can execute arbitrary code during installation through setup.py, and typosquatting attacks on PyPI have been documented extensively. A comprehensive Python code review setup needs to cover not just your code, but the code you are importing.
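A small first step toward covering the code you import is making sure every dependency is pinned to an exact version, so that a scanner and a reviewer are looking at the same thing that gets installed. The following is a rough sketch of such a check; the function name and the regular expression are assumptions for this example, and it deliberately ignores URL requirements and hash pinning.

```python
import re

# Matches "name==1.2.3", with optional extras such as "uvicorn[standard]==0.30.0".
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[^\]]+\])?==[^=\s]+")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that are not pinned to an exact version."""
    flagged = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        if not PINNED.match(line):
            flagged.append(line)
    return flagged
```

Run against the contents of a requirements.txt, it returns entries like requests>=2.0 or a bare celery. Dedicated tools go much further, but a check of this size is easy to add to a pre-commit hook.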

Categories of Python code review tools

Before diving into specific tools, it helps to understand the different categories and how they complement each other. No single tool covers every dimension of Python code quality. The best setups layer tools from multiple categories.

Linters and formatters

These tools enforce coding standards, catch common errors, and ensure consistent style. For Python, this category includes Ruff (the modern standard), Pylint (the deep analyzer), flake8 (the legacy standard), Black (opinionated formatter), and isort (import sorting). Ruff has increasingly consolidated this category by reimplementing the rules of multiple tools in a single, extremely fast binary.

Type checkers

Python’s type annotation system (PEP 484 and later) is purely advisory unless you run a type checker. mypy (the original, developed for years at Dropbox) and Pyright (from Microsoft, powers VS Code’s Pylance) are the two main options. They catch type mismatches, missing return types, incompatible overrides, and incorrect generic usage.

Security scanners (SAST)

Static Application Security Testing tools analyze code for vulnerabilities without executing it. For Python, this includes Bandit (Python-specific), Semgrep (multi-language with strong Python rules), Snyk Code (cross-file dataflow analysis), and SonarQube (broad coverage). Django and Flask projects benefit enormously from security scanners that understand framework-specific vulnerability patterns.

AI-powered code reviewers

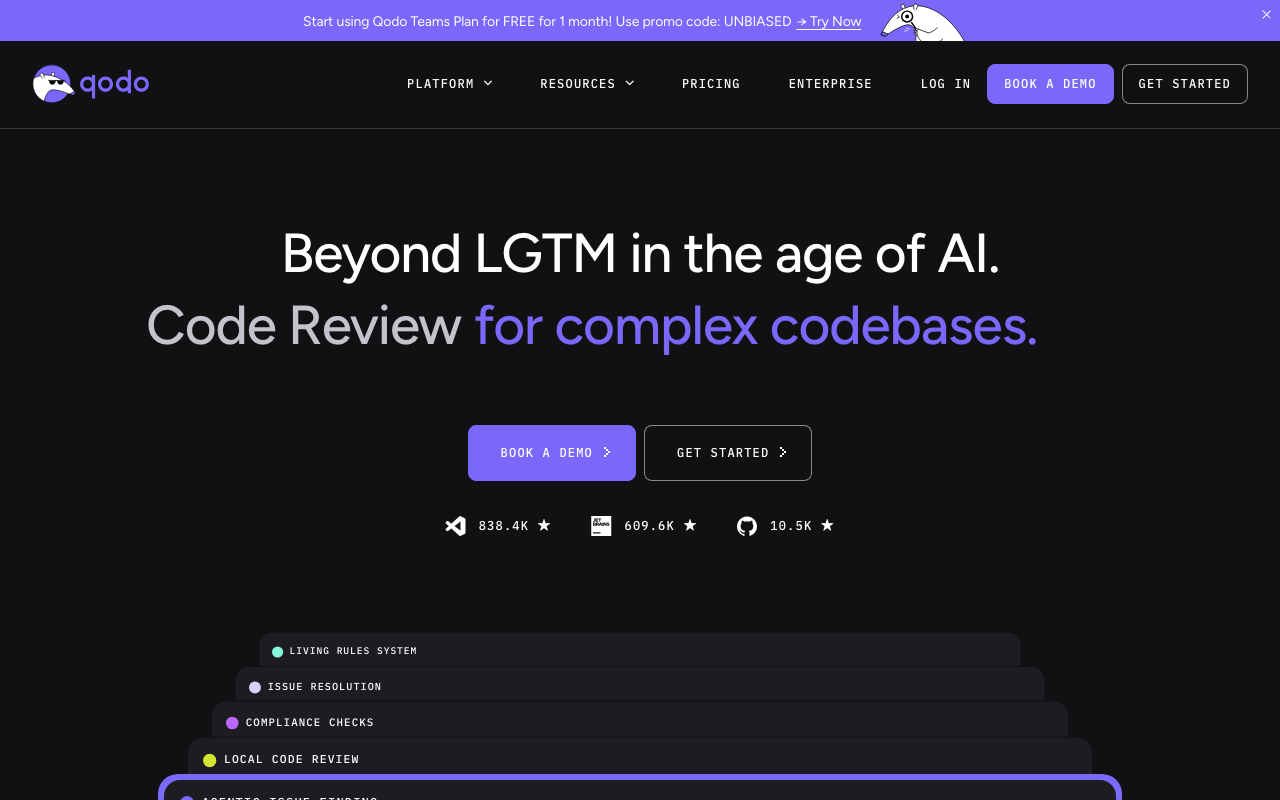

These tools use large language models to understand code semantics and provide review feedback that goes beyond rule matching. Sourcery (Python-first), CodeRabbit (multi-language with strong Python support), DeepSource (AI-enhanced static analysis), and Qodo (test generation and review) fall in this category.

Full code quality platforms

Platforms like SonarQube, Codacy, and DeepSource combine static analysis, security scanning, code coverage tracking, and sometimes AI review into a single tool. They provide dashboards, trend tracking, quality gates, and CI/CD integration across multiple dimensions.

Quick comparison: Python code review tools at a glance

| Tool | Category | Python-Specific | Free Tier | Price | Best For |

|---|---|---|---|---|---|

| Ruff | Linter + formatter | Yes (Python-only) | OSS | Free | Fast linting, replacing flake8/isort/Black |

| Pylint | Deep linter | Yes (Python-only) | OSS | Free | Deep analysis, custom plugins |

| mypy | Type checker | Yes (Python-only) | OSS | Free | Type safety in CI/CD |

| Pyright | Type checker | Yes (Python-only) | OSS | Free | IDE type checking (Pylance) |

| Bandit | Security scanner | Yes (Python-only) | OSS | Free | Python-specific vulnerability detection |

| Sourcery | AI code quality | Yes (Python-first) | OSS only | $10/user/mo | Python refactoring suggestions |

| CodeRabbit | AI PR review | Strong support | Unlimited | $24/user/mo | Overall AI review quality |

| DeepSource | Code quality + AI | Strong support | Individual | $24/user/mo | Low false positive analysis |

| SonarQube | Static analysis | 500+ Python rules | Community | ~$13/mo | Enterprise rule depth |

| Semgrep | Security scanning | Django/Flask rules | 10 devs | $35/dev/mo | Custom security rules |

| Snyk Code | Security SAST | Cross-file Python | Limited | $25/dev/mo | Full-stack security |

| Codacy | Code quality | Multi-tool Python | Yes | $15/user/mo | All-in-one for small teams |

| PR-Agent | AI PR review | Good support | Self-hosted | $30/user/mo | Self-hosted AI review |

| Qodo | AI review + tests | Good support | Yes | $30/user/mo | Python test generation |

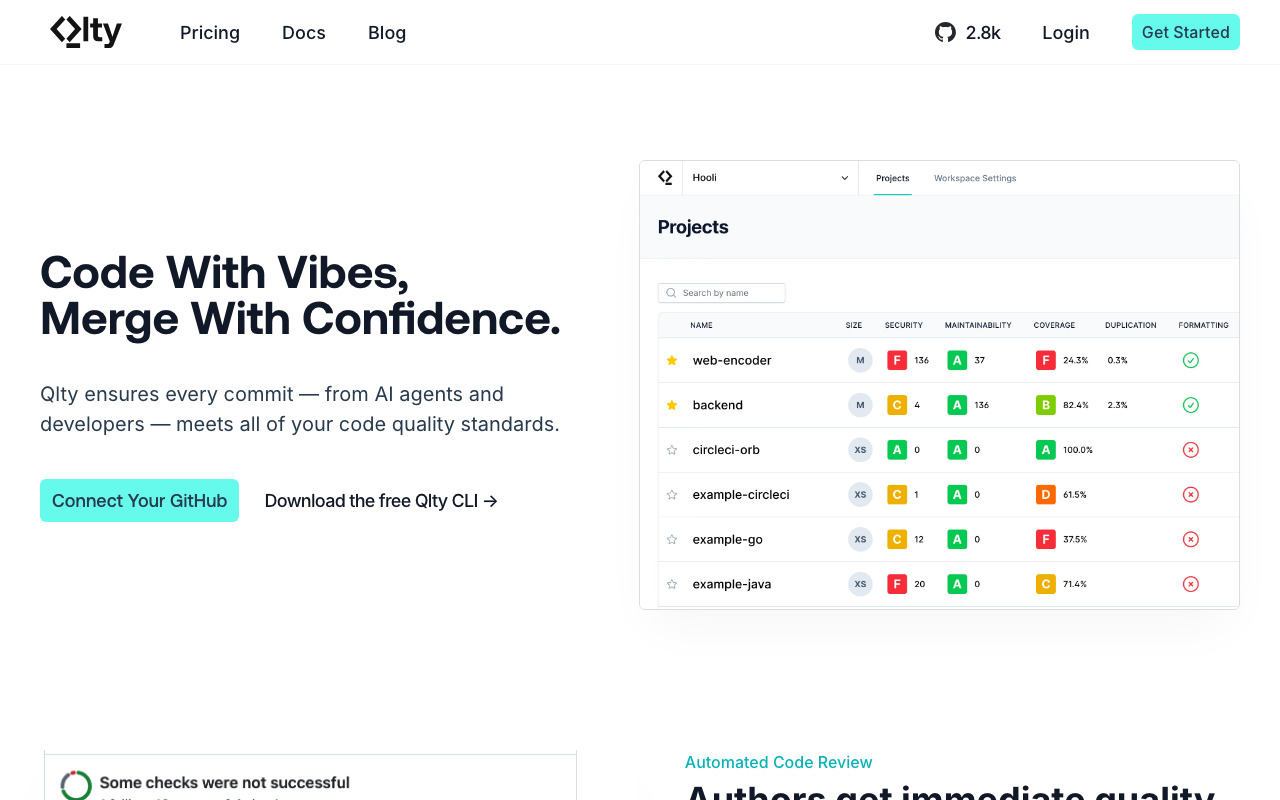

| Qlty | Code health | Multi-tool Python | CLI free | $15/dev/mo | Polyglot codebases |

Python-specific linters: Ruff, Pylint, and flake8

The linter is the foundation of any Python code review setup. It runs fastest, catches the most common issues, and integrates into every stage of the development workflow - from pre-commit hooks to CI pipelines to IDE extensions. Choosing the right linter matters because it determines the baseline quality of every line of code your team writes.

Ruff - The modern Python linter

Ruff has fundamentally changed the Python linting landscape since its initial release. Written in Rust, it is 10 to 100 times faster than Pylint and flake8, which means it can lint an entire large codebase in the time those tools take to lint a single file. But speed is only part of the story.

Ruff reimplements rules from over a dozen Python tools - flake8, isort, pycodestyle, pyflakes, pydocstyle, pyupgrade, autoflake, and more - in a single binary with a single configuration file. This means you can replace your entire linting toolchain with one tool:

    # pyproject.toml - Ruff replaces flake8, isort, pyupgrade, and more
    [tool.ruff]
    target-version = "py312"
    line-length = 120

    [tool.ruff.lint]
    select = [
        "E",    # pycodestyle errors
        "W",    # pycodestyle warnings
        "F",    # pyflakes
        "I",    # isort
        "N",    # pep8-naming
        "UP",   # pyupgrade
        "B",    # flake8-bugbear
        "S",    # flake8-bandit (security)
        "A",    # flake8-builtins
        "C4",   # flake8-comprehensions
        "DTZ",  # flake8-datetimez
        "DJ",   # flake8-django
        "PT",   # flake8-pytest-style
        "SIM",  # flake8-simplify
        "RUF",  # Ruff-specific rules
    ]

    [tool.ruff.lint.per-file-ignores]
    "tests/**/*.py" = ["S101"]       # Allow assert in tests
    "migrations/**/*.py" = ["E501"]  # Allow long lines in migrations

    [tool.ruff.format]
    quote-style = "double"
    indent-style = "space"

That single configuration replaces what previously required separate config files for flake8, isort, Black, pyupgrade, and several flake8 plugins. Ruff covers over 800 lint rules as of early 2026 and adds new ones regularly.

Where Ruff excels for Python projects:

Ruff’s flake8-django ruleset (DJ) catches Django-specific issues like missing __str__ methods on models, incorrect Meta class options, and deprecated Django patterns. The flake8-bandit ruleset (S) covers common security issues like hardcoded passwords, use of eval(), and insecure hash algorithms. The flake8-pytest-style rules (PT) enforce consistent test patterns.

Ruff also handles formatting through ruff format, which is a near-drop-in replacement for Black. Running ruff check --fix && ruff format in your pre-commit hook gives you linting, auto-fixing, import sorting, and formatting in under a second for most projects.

Where Ruff falls short:

Ruff analyzes each file in isolation. It builds an AST and resolves names within that file, but it does not build a cross-module model of your program. This means it cannot perform the kind of inter-module type inference that Pylint does, it cannot follow imports across modules to detect circular dependencies, and it cannot load custom plugins. For most teams, this trade-off is worth it - Ruff covers the large majority of common linting needs at dramatically better speed. But teams that need deep analysis should consider layering Pylint on top.

Pylint - The deep Python analyzer

Pylint has been one of the most thorough Python linters for over two decades, and it still performs analysis that few other tools replicate. Unlike Ruff, Pylint uses its astroid library to infer the types of expressions, following imports across modules, which lets it catch issues like:

    class UserService:
        def __init__(self, db):
            self.db = db

        def get_user(self, user_id: int) -> dict:
            user = self.db.query(User).filter_by(id=user_id).first()
            return user.to_dict()  # Pylint: "Instance of 'None' has no 'to_dict' member"

When Pylint can infer that .first() on a SQLAlchemy query may return None instead of a User, it reports that calling .to_dict() on None will raise an AttributeError. Ruff cannot catch this because it does not perform cross-expression type inference.
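Whichever tool reports it, the remedy is an explicit guard for the no-result case. The sketch below reproduces the pattern without a database: InMemoryUsers is a hypothetical stand-in for the session, and returning None for a missing user is one assumption among several reasonable choices (raising a domain-specific error is another).

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class User:
    id: int
    name: str


class InMemoryUsers:
    """Hypothetical stand-in for a database session."""

    def __init__(self, users: list[User]) -> None:
        self._by_id = {u.id: u for u in users}

    def first(self, user_id: int) -> Optional[User]:
        return self._by_id.get(user_id)  # None when no row matches


def get_user(db: InMemoryUsers, user_id: int) -> Optional[dict]:
    """Return the user as a dict, or None if the id is unknown."""
    user = db.first(user_id)
    if user is None:  # explicit guard removes the AttributeError path
        return None
    return asdict(user)
```

Declaring the return type as Optional[dict] also pushes the decision to callers, where a type checker can verify that they handle the missing case.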

Pylint’s plugin system is another differentiator. The pylint-django plugin understands Django’s ORM, model fields, and view patterns. It knows that a CharField defined in a model will be available as a string attribute on instances, which prevents false positives about undefined attributes. The pylint-celery plugin catches common mistakes in async task definitions. Custom Pylint plugins can encode your team’s specific patterns and architectural constraints.

The speed problem:

Pylint’s thoroughness comes at a cost. On a 50,000-line codebase, Pylint might take 30-60 seconds to complete a full analysis, while Ruff finishes in under a second. This makes Pylint impractical for pre-commit hooks or rapid IDE feedback on large projects. The common solution is to run Ruff locally and in pre-commit hooks for fast feedback, then run Pylint in CI for the deep analysis that catches what Ruff misses.

    # .pre-commit-config.yaml - Fast local, deep in CI
    repos:
      - repo: https://github.com/astral-sh/ruff-pre-commit
        rev: v0.9.0
        hooks:
          - id: ruff
            args: [--fix]
          - id: ruff-format

The matching CI workflow then runs the slower, deeper analysis:

    # .github/workflows/lint.yml - Deep analysis in CI
    name: lint
    on: [push, pull_request]
    jobs:
      lint:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
          - uses: actions/setup-python@v5
            with:
              python-version: "3.12"
          - run: pip install pylint pylint-django
          - run: pylint --load-plugins pylint_django src/

flake8 - The legacy standard

flake8 is worth mentioning because it remains widely deployed, but for new projects in 2026, there is little reason to choose it over Ruff. Ruff implements all of flake8’s core rules plus the rules from most popular flake8 plugins, runs dramatically faster, and provides autofix for many issues that flake8 can only report.

Teams already invested in flake8 with custom plugins that have not been ported to Ruff may want to keep flake8 running alongside Ruff. But for the vast majority of Python projects, Ruff is the strictly better choice in this category.

Type checking tools: mypy and Pyright

Type checking is the second layer of Python code review. Linters catch style issues and common bugs; type checkers catch an entire class of errors that dynamic typing makes possible. If your codebase uses type annotations - and most professional Python codebases in 2026 do - a type checker is not optional.

mypy - The standard for CI/CD type checking

mypy was the first mainstream Python type checker. Jukka Lehtosalo started it as a research project and continued its development at Dropbox, where Guido van Rossum also contributed to it. It remains the most widely integrated type checker in CI/CD pipelines and has the broadest ecosystem of third-party type stubs.

A properly configured mypy setup catches a remarkable number of bugs:

    # mypy catches all of these
    from typing import Optional

    def calculate_discount(price: float, discount: Optional[float]) -> float:
        return price * (1 - discount)  # error: Unsupported operand types for - ("int" and "None")

    def process_items(items: list[str]) -> list[str]:
        # Missing parentheses on .upper
        return [item.upper for item in items]  # error: List comprehension has incompatible type List[Callable[[], str]]; expected List[str]

    class Config:
        debug: bool = False
        timeout: int = 30

    config = Config()
    config.timeout = "60"  # error: Incompatible types in assignment (expression has type "str", variable has type "int")

A mypy configuration for a production Python project typically looks like this:

    # pyproject.toml
    [tool.mypy]
    python_version = "3.12"
    strict = true
    warn_return_any = true
    warn_unused_configs = true
    disallow_untyped_defs = true
    disallow_incomplete_defs = true
    check_untyped_defs = true
    disallow_untyped_decorators = true
    no_implicit_optional = true
    warn_redundant_casts = true
    warn_unused_ignores = true
    warn_no_return = true

    # Per-module overrides for third-party libraries without stubs
    [[tool.mypy.overrides]]
    module = ["celery.*", "redis.*"]
    ignore_missing_imports = true

    # Stricter settings for core business logic
    [[tool.mypy.overrides]]
    module = ["app.core.*", "app.domain.*"]
    disallow_any_generics = true
    disallow_any_unimported = true

mypy with Django:

Django’s dynamic nature - model fields becoming instance attributes, queryset chaining, generic views - historically made mypy integration painful. The django-stubs package (maintained by the TypedDjango project) has solved most of these issues. It provides type stubs that make mypy understand Django models, views, forms, and the ORM:

    # With django-stubs, mypy understands Django ORM types
    from myapp.models import User

    def get_active_users() -> list[User]:
        # mypy knows .filter() returns a QuerySet[User]
        # mypy knows .values_list() changes the return type
        users = User.objects.filter(is_active=True)
        return list(users)

Pyright - The faster alternative

Pyright, developed by Microsoft, is the type checker that powers VS Code’s Pylance extension. It is significantly faster than mypy on large codebases - often 3 to 5 times faster - because it uses incremental analysis and is written in TypeScript with a focus on performance.

Pyright’s strict mode is also stricter than mypy’s strict mode. It catches patterns that mypy allows by default, such as implicit Any returns from untyped third-party libraries. This makes Pyright a good choice for teams that want maximum type safety, though it also means more initial configuration work to suppress false positives from libraries without complete type stubs.

The practical recommendation:

Many production Python teams use both tools. Pyright provides real-time feedback in VS Code through Pylance, giving developers immediate type error highlighting as they write code. mypy runs in CI/CD as the authoritative type check, because it has broader community adoption and more third-party stub packages. The two tools agree on the vast majority of checks, and where they disagree, the differences are usually edge cases around advanced generic types or protocol classes.
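As a reference point for what both checkers accept, here are the three failing snippets from the mypy section rewritten so that they type-check. This is one possible set of fixes; treating a missing discount as "no discount" and converting the timeout with int() are assumptions about the intended behavior.

```python
from typing import Optional


def calculate_discount(price: float, discount: Optional[float]) -> float:
    if discount is None:  # narrow Optional[float] to float before arithmetic
        return price
    return price * (1 - discount)


def process_items(items: list[str]) -> list[str]:
    return [item.upper() for item in items]  # call the method


class Config:
    debug: bool = False
    timeout: int = 30


config = Config()
config.timeout = int("60")  # convert at the boundary so the attribute stays an int
```

The common thread is that each fix states a decision the original code left implicit, which is most of what a type checker asks for in practice.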

Platform tools with Python-specific capabilities

The tools above are all open-source and Python-specific. The following platform tools provide broader functionality - AI review, security scanning, quality tracking - with strong Python support as part of a multi-language offering.

Sourcery - Purpose-built AI for Python

Sourcery was originally built exclusively for Python and has expanded to support JavaScript, TypeScript, and Go. But Python remains its strongest language by a significant margin, and it is the only AI code review tool that was designed from the ground up for Python’s idioms and patterns.

What makes Sourcery Python-specific:

Sourcery understands Pythonic patterns at a deep level. It does not just flag issues - it suggests refactorings that transform correct-but-verbose code into idiomatic Python. Here are examples of the kinds of transformations Sourcery suggests:

    # Before: Sourcery flags this as non-Pythonic
    filtered_users = []
    for user in users:
        if user.is_active and user.age >= 18:
            filtered_users.append(user.name)

    # After: Sourcery suggests list comprehension
    filtered_users = [
        user.name for user in users
        if user.is_active and user.age >= 18
    ]

    # Before: Sourcery detects unnecessary else after return
    def get_status(code):
        if code == 200:
            return "ok"
        else:
            if code == 404:
                return "not found"
            else:
                return "error"

    # After: Sourcery suggests flat structure
    def get_status(code):
        if code == 200:
            return "ok"
        if code == 404:
            return "not found"
        return "error"

These are not trivial style preferences. Pythonic code is genuinely easier to read, maintain, and review. Sourcery’s suggestions consistently move code toward the patterns that experienced Python developers would write from scratch.

Sourcery’s code quality metrics:

Beyond refactoring suggestions, Sourcery computes a quality score for every function in your codebase based on four dimensions: complexity (cyclomatic and cognitive), method length, working memory (number of variables a reader must track), and nesting depth. Functions scoring below thresholds are flagged in PR reviews with specific suggestions for improvement.

Pricing and limitations:

Sourcery’s free tier is limited to open-source projects. The Pro tier at $10/user/month unlocks private repos and custom coding guidelines, while the Team tier at $24/user/month adds security scanning and analytics. The biggest limitation is language support. If your codebase is primarily Python, Sourcery is excellent. If you have significant TypeScript, Go, or Java code alongside Python, you may prefer a multi-language tool like CodeRabbit or DeepSource, at the cost of some depth in Python-specific suggestions.

CodeRabbit - Best overall AI review with strong Python support

CodeRabbit is the most widely installed AI code review tool on GitHub, and while it is not Python-specific, its Python support is among the best of any multi-language AI reviewer. CodeRabbit uses LLMs to understand code semantics, which means it can reason about Python code at a level that rule-based tools cannot reach.

Python-specific capabilities:

CodeRabbit’s strength with Python code comes from its ability to understand context across an entire pull request. It catches issues like:

- Missing error handling in async code - Flagging await calls without try/except in FastAPI endpoints where unhandled exceptions return 500 errors
- Django ORM N+1 queries - Detecting loops that trigger individual database queries when select_related() or prefetch_related() would be more efficient
- Type annotation inconsistencies - Noticing when function signatures declare Optional[str] but the implementation never handles None
- Test coverage gaps - Pointing out that a new function with three code paths only has tests for two of them

You can tune CodeRabbit’s Python-specific behavior through its natural language configuration:

    # .coderabbit.yaml - Python-specific instructions
    reviews:
      path_instructions:
        - path: "**/*.py"
          instructions: |
            Check for proper exception handling in Django views.
            Flag any use of mutable default arguments in function signatures.
            Warn when Django model fields lack help_text or verbose_name.
            Check that all FastAPI endpoints have proper response_model types.
            Flag bare except clauses - always catch specific exception types.

The free tier advantage:

CodeRabbit’s unlimited free tier for both public and private repos makes it the easiest AI review tool to adopt. A Python team can install it on their GitHub organization in under five minutes and start getting AI reviews on every pull request immediately. The Pro tier at $24/user/month adds custom review profiles and advanced configuration, but the free tier is genuinely useful for most teams.

Honest limitations for Python teams:

CodeRabbit does not replace Ruff, mypy, or Bandit. It catches a different class of issues - logic errors, missing edge cases, architectural concerns - but it does not enforce linting rules deterministically and it does not perform formal type checking. The best Python workflow uses CodeRabbit alongside dedicated Python tools rather than as a replacement for them.

DeepSource - Low false positives with Python autofix

DeepSource combines static analysis with AI-powered review and has one of the strongest Python analyzers among the multi-language platforms. It specifically markets a sub-5% false positive rate, which is a meaningful claim for Python where dynamic typing often causes static analysis tools to generate noise.

Python analysis depth:

DeepSource’s Python analyzer covers over 150 Python-specific issues across categories: anti-patterns, bug risks, performance, security, style, and type checking. Notable Python-specific checks include:

- Mutable default arguments - The classic Python gotcha where def func(items=[]) shares the list across calls
- Late binding closures - Detecting closures in loops that capture the variable rather than the value
- Incorrect use of __all__ - Catching exports that reference names not defined in the module
- Django-specific checks - Missing database indexes, unoptimized query patterns, insecure settings
- Pandas anti-patterns - Detecting iterative operations on DataFrames where vectorized operations exist

DeepSource’s Autofix feature is particularly strong for Python. When it detects an issue, it often generates a fix that can be applied directly from the pull request comment. For Python, these fixes are usually correct and Pythonic - they are not just mechanical transformations but reflect an understanding of Python idioms.
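The first two checks in that list are easy to reproduce. The snippet below shows each gotcha next to a conventional fix; it demonstrates the language behavior itself and is not output from DeepSource, and the function names are made up for the example.

```python
def add_item_shared(item, items=[]):  # bug: one list is shared by every call
    items.append(item)
    return items


def add_item(item, items=None):  # fix: create a fresh list per call
    if items is None:
        items = []
    items.append(item)
    return items


# Late binding: each lambda looks up i when it is called, after the loop has ended.
late = [lambda: i for i in range(3)]

# Binding i as a default argument captures the value at definition time.
bound = [lambda i=i: i for i in range(3)]
```

Calling add_item_shared twice returns the same growing list, while add_item starts fresh each time. Every function in late returns the final loop value, whereas the functions in bound return 0, 1, and 2.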

Integration with Python tooling:

DeepSource can run mypy, Ruff, and other Python tools as part of its analysis pipeline and surface their results in its unified dashboard. This means you can get Ruff linting, mypy type checking, and DeepSource’s own analysis all reported in one place on your pull requests, with consistent formatting and a single configuration file:

# .deepsource.toml

version = 1

[[analyzers]]

name = "python"

enabled = true

[analyzers.meta]

runtime_version = "3.x"

max_line_length = 120

type_checker = "mypy"

[[transformers]]

name = "ruff"

enabled = truePricing:

DeepSource offers a free tier for individual developers. The Team plan starts at $24/user/month. For Python teams that want a single platform covering linting, type checking, and AI review, DeepSource is one of the most integrated options available.

SonarQube - Enterprise Python analysis with 500+ rules

SonarQube has over 500 rules specifically for Python, making it one of the deepest rule-based analyzers for the language. For enterprise teams that need compliance reporting, quality gate enforcement, and long-term trend tracking, SonarQube’s Python support is comprehensive.

Python-specific rule categories:

SonarQube’s Python rules cover bug detection, code smells, security vulnerabilities, and security hotspots. Some of the more valuable Python-specific rules include:

- Cognitive complexity tracking - Measures how hard a function is to understand (not just how many branches it has) and flags functions that exceed configurable thresholds
- Django security rules - Detection of raw SQL queries, missing CSRF middleware, insecure session configuration, and improper use of mark_safe()
- Flask security rules - Detection of debug mode in production, missing secure headers, and improper secret key handling
- Dead code detection - Identification of unreachable code paths, unused imports, and variables that are assigned but never read
- Exception handling analysis - Flagging overly broad exception handlers, empty except blocks, and re-raising patterns that lose the original traceback
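For the exception handling item, one common remedy is explicit exception chaining, which keeps the original error attached when a handler raises a more meaningful one. The sketch below shows the pattern; ConfigError and load_port are hypothetical names used only for illustration.

```python
import json


class ConfigError(Exception):
    """Hypothetical application-level error."""


def load_port(raw: str) -> int:
    """Parse a JSON document and return its port as an integer."""
    try:
        return int(json.loads(raw)["port"])
    except (KeyError, ValueError, TypeError) as exc:
        # "from exc" stores the original error on __cause__, so the traceback
        # shows both the low-level failure and this higher-level one.
        raise ConfigError("invalid port configuration") from exc
```

The handler catches only the specific exceptions the parsing step can raise, which also avoids the overly broad handler that the same rule category flags.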

SonarQube’s type-aware analysis:

Unlike simpler linters that only look at syntax, SonarQube performs cross-file analysis on Python code. It follows import chains to understand which modules and classes are available, and it uses this information to detect issues like accessing attributes that do not exist on imported objects or calling functions with the wrong number of arguments.

    # SonarQube catches cross-file issues

    # file: utils.py
    def calculate_tax(amount: float, rate: float) -> float:
        return amount * rate

    # file: views.py
    from .utils import calculate_tax

    def order_summary(request, order_id):
        order = Order.objects.get(id=order_id)
        # SonarQube: calculate_tax expects 2 arguments, 3 given
        tax = calculate_tax(order.subtotal, order.tax_rate, order.state)

Quality gates for Python teams:

SonarQube’s quality gate feature is particularly useful for Python projects with growing teams. You can set thresholds for metrics like code coverage, duplicated lines, new bugs, and new security vulnerabilities. Pull requests that do not meet these thresholds are blocked from merging, which provides a consistent quality baseline regardless of who reviewed the code:

    Quality Gate: Python Production
    - New Coverage: >= 80%
    - New Duplicated Lines: <= 3%
    - New Bugs: 0
    - New Vulnerabilities: 0
    - New Security Hotspots Reviewed: 100%
    - New Cognitive Complexity: <= 15 per function

Limitations for Python teams:

SonarQube’s self-hosting requirement is its biggest barrier. The free Community Build lacks branch analysis and PR decoration, which makes it impractical for teams using pull request workflows. The Developer Edition (required for PR integration) requires a paid license and a maintained server. SonarQube Cloud eliminates hosting overhead but uses LOC-based pricing that can become expensive for large Python monorepos. Teams that want SonarQube-level rule depth without the hosting burden should consider Codacy or DeepSource as alternatives.

Semgrep - Custom security rules for Django and Flask

Semgrep is an open-source static analysis tool that excels at writing custom rules using a pattern-matching syntax that looks like the code it analyzes. For Python security scanning, Semgrep’s approach is uniquely powerful because you can write rules that understand Python-specific and framework-specific patterns.

Pre-built Python security rulesets:

Semgrep maintains official rulesets for Python, Django, and Flask that cover hundreds of vulnerability patterns:

    # Running Semgrep with Python security rulesets
    # semgrep --config p/python --config p/django --config p/flask

    # Example rule: detecting SQL injection in Django
    rules:
      - id: django-raw-sql-injection
        patterns:
          - pattern: |
              $MODEL.objects.raw($QUERY)
          - pattern-not: |
              $MODEL.objects.raw("...", [...])
        message: "Potential SQL injection via Django raw query without parameterization"
        languages: [python]
        severity: ERROR
        metadata:
          cwe: "CWE-89: SQL Injection"
          owasp: "A03:2021 Injection"

The Django ruleset covers SQL injection through the ORM, XSS through mark_safe() and |safe template filters, CSRF bypass patterns, insecure file upload handling, and authentication weaknesses. The Flask ruleset covers similar categories plus Flask-specific concerns like debug mode in production, unsafe deserialization, and missing security headers.

Writing custom Python rules:

Semgrep’s pattern syntax makes it straightforward to encode your team’s specific Python security and quality requirements:

    # Custom rule: prevent environment variable access without defaults
    rules:
      - id: require-env-default
        pattern: os.environ[$KEY]
        fix: os.environ.get($KEY, "")
        message: "Use os.environ.get() with a default value instead of direct access"
        languages: [python]
        severity: WARNING

      - id: no-pickle-loads
        pattern: pickle.loads(...)
        message: "pickle.loads() is unsafe with untrusted data. Use json.loads() instead"
        languages: [python]
        severity: ERROR

      - id: django-no-wildcard-import
        pattern: from django.conf.settings import *
        message: "Wildcard imports from Django settings make dependencies unclear"
        languages: [python]
        severity: WARNING

Semgrep’s Python dataflow analysis:

Beyond pattern matching, Semgrep supports taint tracking for Python. It can follow data from sources (like request.GET, request.POST, or request.FILES in Django) through intermediate variables to sinks (like cursor.execute(), subprocess.run(), or eval()). This catches vulnerabilities that simple pattern matching misses - cases where untrusted input is stored in a variable, transformed along the way, and eventually reaches a dangerous operation. In the open-source engine this analysis stays within a single function; following taint across functions and files requires Semgrep’s Pro engine.

Pricing:

Semgrep’s OSS engine is free and can be run locally or in CI/CD. Semgrep Cloud (managed service with dashboard, policy management, and team features) is free for up to 10 contributors and $35/contributor/month after that. For most Python teams, the OSS engine running in GitHub Actions is sufficient for security scanning.

Snyk Code - Cross-file Python security analysis

Snyk Code is the SAST component of Snyk’s security platform. Its primary differentiator for Python teams is cross-file dataflow analysis that traces data through imports, function calls, and class hierarchies across your entire codebase - not just individual files.

Python-specific dataflow analysis:

Snyk Code builds a semantic model of your Python application and uses it to detect vulnerabilities that span multiple files. Consider a common Django pattern:

# file: views.py
def create_report(request):
    data = request.POST.get("report_data")
    report = ReportService.generate(data)
    return JsonResponse(report)

# file: services.py
class ReportService:
    @staticmethod
    def generate(data):
        # Several layers of indirection
        parsed = ReportParser.parse(data)
        return ReportBuilder.build(parsed)

# file: builders.py
class ReportBuilder:
    @staticmethod
    def build(parsed_data):
        query = f"SELECT * FROM reports WHERE data = '{parsed_data}'"
        # SQL injection - but the taint source is two files away, in views.py
        return db.execute(query)

A single-file scanner looking only at builders.py cannot see that parsed_data originates from request.POST. Snyk Code traces the data flow across all three files and flags the SQL injection with the full path from source to sink.
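Whichever tool reports this finding, the usual remediation is a parameterized query. The sketch below uses the standard-library sqlite3 module as a stand-in for the article's db object; the table layout and function names are assumptions made for illustration.

```python
import sqlite3


def build_report_unsafe(conn: sqlite3.Connection, parsed_data: str) -> list:
    # Vulnerable: user input is interpolated into the SQL text itself.
    query = f"SELECT id FROM reports WHERE data = '{parsed_data}'"
    return conn.execute(query).fetchall()


def build_report_safe(conn: sqlite3.Connection, parsed_data: str) -> list:
    # Parameterized: the value is passed separately from the SQL text.
    return conn.execute(
        "SELECT id FROM reports WHERE data = ?", (parsed_data,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE reports (id INTEGER PRIMARY KEY, data TEXT)")
conn.executemany("INSERT INTO reports (data) VALUES (?)", [("q1",), ("q2",)])

malicious = "x' OR '1'='1"
leaked = build_report_unsafe(conn, malicious)   # matches every row
blocked = build_report_safe(conn, malicious)    # matches nothing
```

With the placeholder form, the injected quote characters are treated as data, so the malicious string simply fails to match any row.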

Python dependency scanning:

Snyk’s broader platform also covers dependency vulnerabilities in requirements.txt, Pipfile.lock, poetry.lock, and pyproject.toml. This means a single Snyk installation covers both your code and your dependencies. For Python teams concerned about supply chain security, this combination is particularly valuable - you get SAST and SCA (Software Composition Analysis) from one tool.

Integration with Python CI/CD:

Snyk Code integrates with GitHub, GitLab, Bitbucket, and Azure DevOps for PR-level scanning. It also provides a CLI (snyk code test) that can run in any CI pipeline and a VS Code extension for real-time feedback during development. The Python-specific analysis typically completes in 1 to 3 minutes for medium-sized codebases.

Pricing:

Snyk offers a free tier with limited scans for a single user. The Team plan at $25/developer/month includes Snyk Code (SAST), Snyk Open Source (SCA), and basic container scanning. For Python teams that need both code security and dependency security, Snyk’s bundled offering is cost-effective compared to running separate tools.

Codacy - All-in-one Python quality for small teams

Codacy is a code quality platform that aggregates results from multiple Python tools into a unified dashboard. Rather than building its own Python analyzer from scratch, Codacy runs established Python tools - Pylint, Bandit, Prospector, and others - and surfaces their results alongside its own analysis.

Python tool aggregation:

Codacy’s approach means you get the combined coverage of multiple Python-specific tools without configuring each one individually. For a Python project, Codacy typically runs:

- Pylint for code quality and style enforcement

- Bandit for security vulnerability detection

- Prospector for additional Python-specific checks

- Radon for cyclomatic complexity and maintainability index

- Custom pattern matching for common Python anti-patterns

The results are deduplicated, organized by severity, and presented in Codacy’s dashboard alongside metrics like code coverage trends, duplication percentages, and technical debt estimates.

Django and Flask support:

Codacy understands Django and Flask project structures. It knows to apply different rule sets to models.py, views.py, urls.py, settings.py, and test files. Security rules are applied more aggressively to view functions and API endpoints, while complexity thresholds may be relaxed for Django migrations (which are auto-generated and inherently verbose).

Pricing advantage:

At $15/user/month, Codacy is the most affordable full-featured code quality platform for Python teams. The free tier supports limited repositories and is sufficient for small open-source projects. For teams that want a single platform covering linting, security, complexity, and coverage tracking without the operational overhead of SonarQube, Codacy is a strong value proposition.

Limitations:

Codacy’s Python analysis depth is limited by the underlying tools it runs. It does not perform the cross-file dataflow analysis that Snyk Code or Semgrep offer, and its AI review capabilities are more basic than tools like CodeRabbit or Sourcery. For teams that need deep security scanning or sophisticated AI review, Codacy works best as a baseline quality platform supplemented by a specialized tool.

PR-Agent (Qodo Merge) - Self-hosted AI review for Python

PR-Agent is an open-source AI code review tool created by Qodo (formerly CodiumAI) that you can self-host. For Python teams in regulated industries or organizations with strict data handling requirements, PR-Agent provides AI review capabilities without sending code to third-party servers.

Python-specific review quality:

PR-Agent uses LLMs (configurable - GPT-4, Claude, or self-hosted models) to review pull requests. For Python code, it provides:

- Automatic PR descriptions - Generates a summary of changes with a focus on what the Python code does, not just what files changed

- Code suggestions - Python-specific refactoring suggestions including Pythonic patterns, error handling improvements, and performance optimizations

- Review comments - Inline comments flagging potential bugs, security issues, and maintainability concerns

- Test suggestions - Identifies untested code paths and suggests test cases

The self-hosted option means you run PR-Agent on your own infrastructure, connecting it to your own LLM API keys. This keeps your Python source code within your network - a critical requirement for teams working with proprietary algorithms or sensitive data.

Configuration for Python projects:

# .pr_agent.toml

[pr_description]

enable_semantic_files_types = true

[pr_reviewer]

extra_instructions = """

For Python files:

- Check for proper type annotations on all public functions

- Flag any use of mutable default arguments

- Verify Django views have proper permission decorators

- Check that FastAPI endpoints define response models

"""

Pricing:

PR-Agent’s open-source version is free to self-host. The managed version (Qodo Merge) costs $30/user/month and eliminates the need to maintain your own deployment. For Python teams that want AI review with full control over data handling, PR-Agent is one of the few mature open-source options.

Qodo - AI test generation for Python

Qodo (formerly CodiumAI) focuses on a specific dimension of code review that other tools largely ignore: test quality. For Python teams, where achieving meaningful test coverage requires understanding dynamic types, mock patterns, and framework-specific test utilities, Qodo’s AI-generated tests are genuinely useful.

Python test generation:

Qodo analyzes your Python functions and generates pytest test cases that cover edge cases a human reviewer might miss:

# Given this function:
import json

def parse_config(filepath: str, required_keys: list[str]) -> dict:
    with open(filepath) as f:
        config = json.load(f)
    missing = [k for k in required_keys if k not in config]
    if missing:
        raise ValueError(f"Missing required keys: {missing}")
    return config

# Qodo generates tests like:
def test_parse_config_valid():
    """Test with a valid config file containing all required keys."""
    ...

def test_parse_config_missing_keys():
    """Test that ValueError is raised when required keys are absent."""
    ...

def test_parse_config_file_not_found():
    """Test that FileNotFoundError is raised for nonexistent file."""
    ...

def test_parse_config_invalid_json():
    """Test that JSONDecodeError is raised for malformed JSON."""
    ...

def test_parse_config_empty_required_keys():
    """Test with an empty required_keys list returns any valid config."""
    ...

For Django projects, Qodo understands Django’s test client, factory patterns, and model fixtures. It generates tests that use TestCase, RequestFactory, and proper database setup/teardown patterns rather than generic unittest boilerplate.
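For a sense of what the parse_config test stubs could look like once filled in, here is a hand-written sketch of three of them. It is not actual Qodo output. The write_config helper is hypothetical, and tmp_path is assumed to be pytest's built-in temporary directory fixture; plain try/except is used so the functions also run without pytest installed.

```python
import json
from pathlib import Path


def parse_config(filepath: str, required_keys: list[str]) -> dict:
    with open(filepath) as f:
        config = json.load(f)
    missing = [k for k in required_keys if k not in config]
    if missing:
        raise ValueError(f"Missing required keys: {missing}")
    return config


def write_config(directory: Path, text: str) -> str:
    """Hypothetical helper: write text to a config file and return its path."""
    path = directory / "config.json"
    path.write_text(text)
    return str(path)


def test_parse_config_valid(tmp_path: Path) -> None:
    path = write_config(tmp_path, json.dumps({"host": "db", "port": 5432}))
    assert parse_config(path, ["host", "port"]) == {"host": "db", "port": 5432}


def test_parse_config_missing_keys(tmp_path: Path) -> None:
    path = write_config(tmp_path, json.dumps({"host": "db"}))
    try:
        parse_config(path, ["host", "port"])
    except ValueError as exc:
        assert "port" in str(exc)
    else:
        raise AssertionError("expected ValueError")


def test_parse_config_invalid_json(tmp_path: Path) -> None:
    path = write_config(tmp_path, "{not json")
    try:
        parse_config(path, [])
    except json.JSONDecodeError:
        pass
    else:
        raise AssertionError("expected JSONDecodeError")
```

Note that json.JSONDecodeError is a subclass of ValueError, so a reviewer may want the malformed-JSON and missing-keys cases to assert on distinct exception types or messages.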

Integration with code review:

Qodo integrates with GitHub and GitLab to provide test suggestions directly on pull requests. When a new Python function is added without tests, Qodo can automatically suggest test cases in the PR comments. This shifts the test coverage conversation from “did you write tests?” to “here are the tests you should add,” which is a more productive review interaction.

Pricing:

Qodo offers a free tier with basic functionality. The Teams plan at $30/user/month includes full test generation, PR review, and IDE integration. For Python teams where test coverage is a persistent challenge, Qodo addresses the root cause - making tests easy to write - rather than just measuring the symptom.

Qlty - Unified code health with Python linter aggregation

Qlty takes a different approach to Python code quality. Instead of building its own analyzer, Qlty provides a unified runtime that orchestrates existing Python tools and presents their results through a consistent interface. It supports over 40 languages, which makes it particularly useful for teams with polyglot codebases that include Python alongside JavaScript, Go, or Rust.

Python tool orchestration:

Qlty can run Ruff, Pylint, mypy, Bandit, and other Python tools as plugins, managing their installation, configuration, and output formatting. The advantage is a single qlty.toml configuration file and a single CLI command that runs your entire Python quality stack:

# qlty.toml

[plugins.ruff]

enabled = true

[plugins.mypy]

enabled = true

[plugins.bandit]

enabled = true

Running qlty check executes all three tools in parallel and presents a unified output with consistent severity levels and formatting. This is particularly valuable in CI/CD where you want a single check that covers linting, type checking, and security scanning.

Code health metrics:

Qlty tracks code health metrics over time, including complexity trends, duplication percentages, and test coverage changes. For Python projects, this historical view helps teams identify quality trends - whether newly merged code is improving or degrading the overall codebase health.

Pricing:

Qlty’s CLI is free for commercial use. The Cloud platform (dashboard, PR integration, team features) starts at $15/contributor/month. For Python teams already using multiple tools and looking for a unified way to run and report on them, Qlty reduces configuration overhead without replacing the underlying tools.

Security scanning for Python: a deeper look

Security deserves its own section because Python’s role in web applications (Django, Flask, FastAPI), data pipelines (pandas, Apache Airflow), and machine learning (PyTorch, TensorFlow) makes it a high-value target. Each domain has unique vulnerability patterns that require specialized detection.

Bandit - Python-specific security analysis

Bandit is the standard open-source security scanner for Python. Originally developed by the OpenStack Security Project and now maintained under the PyCQA organization, it checks for common security issues in Python code using a set of plugins that each test for a specific vulnerability pattern.

Bandit catches issues like:

import subprocess

import pickle

import hashlib

# B602: subprocess call with shell=True

subprocess.call(user_input, shell=True)

# B301: pickle usage (deserialization of untrusted data)

data = pickle.loads(untrusted_bytes)

# B324: use of insecure MD5 hash (reported as B303 by older Bandit releases)

password_hash = hashlib.md5(password.encode()).hexdigest()

# B105: hardcoded password

DB_PASSWORD = "super_secret_123"

# B608: SQL injection via string formatting

query = "SELECT * FROM users WHERE name = '%s'" % user_name

cursor.execute(query)

Bandit integrates with CI/CD pipelines trivially:

# GitHub Actions
- name: Security scan
  run: |
    pip install bandit
    bandit -r src/ -f json -o bandit-results.json

The key limitation of Bandit is that it only analyzes single files. It cannot trace data flow across modules or understand framework-specific contexts. This is where tools like Semgrep and Snyk Code add value on top of Bandit’s baseline coverage.
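For reference, the issues in the Bandit example above each have a conventional safer form. The sketch below uses only the standard library; the function names, the DB_PASSWORD variable name, the users table, and the PBKDF2 iteration count are illustrative assumptions rather than recommendations from Bandit itself.

```python
import hashlib
import hmac
import os
import sqlite3
import subprocess
import sys


def echo_argument(argument: str) -> str:
    """Shell injection: pass an argument list so no shell parses the input."""
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.argv[1])", argument],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


def hash_password(password: str, salt: bytes, iterations: int = 600_000) -> bytes:
    """Weak hash: use a salted key-derivation function instead of bare MD5."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)


def verify_password(password: str, salt: bytes, expected: bytes,
                    iterations: int = 600_000) -> bool:
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(hash_password(password, salt, iterations), expected)


def get_db_password() -> str:
    """Hardcoded secret: read it from the environment and fail loudly if unset."""
    return os.environ["DB_PASSWORD"]


def find_user(conn: sqlite3.Connection, user_name: str) -> list:
    """SQL injection: bind the value as a parameter instead of formatting it."""
    return conn.execute(
        "SELECT name FROM users WHERE name = ?", (user_name,)
    ).fetchall()
```

For pickle, the usual advice is to avoid deserializing untrusted bytes at all and to prefer a data-only format such as JSON where the payload allows it.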

Django-specific security concerns

Django applications face a specific set of vulnerabilities that require Python-aware analysis:

Raw SQL and ORM bypass: Django’s ORM prevents SQL injection by default, but developers sometimes bypass it with raw(), extra(), or cursor.execute() for complex queries. Every one of these bypass points needs to be checked for proper parameterization.

Template injection: Django’s template engine auto-escapes HTML output, but mark_safe(), the |safe filter, and {% autoescape off %} blocks disable this protection. A security scanner needs to verify that any content marked as safe does not include user input.

CSRF bypass patterns: Django’s CSRF middleware protects POST requests, but developers sometimes add @csrf_exempt decorators to views that handle API callbacks or webhook endpoints. These exemptions need review to ensure they are genuinely necessary and that alternative protections are in place.

Insecure settings in production: DEBUG = True, ALLOWED_HOSTS = ['*'], weak SECRET_KEY values, and missing security middleware are common Django misconfigurations that are easy to scan for but costly if missed.
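One common way to avoid the misconfigurations listed above is to derive the sensitive settings from the environment and refuse to start when they look unsafe. The following is a minimal sketch: the environment variable names (DJANGO_DEBUG, DJANGO_SECRET_KEY, DJANGO_ALLOWED_HOSTS) and the helper itself are hypothetical, and the 50-character minimum mirrors the threshold used by Django's own deployment check for SECRET_KEY.

```python
import os
from typing import Mapping


def load_security_settings(env: Mapping[str, str] = os.environ) -> dict:
    """Build security-related Django settings from environment variables."""
    debug = env.get("DJANGO_DEBUG", "false").lower() == "true"
    secret_key = env.get("DJANGO_SECRET_KEY", "")
    allowed_hosts = [
        host.strip()
        for host in env.get("DJANGO_ALLOWED_HOSTS", "").split(",")
        if host.strip()
    ]

    if not debug:
        # Production mode: reject weak or missing values instead of guessing.
        if len(secret_key) < 50:
            raise ValueError("DJANGO_SECRET_KEY must be a long random value")
        if not allowed_hosts or "*" in allowed_hosts:
            raise ValueError("DJANGO_ALLOWED_HOSTS must list explicit hostnames")

    return {
        "DEBUG": debug,
        "SECRET_KEY": secret_key,
        "ALLOWED_HOSTS": allowed_hosts,
    }


# In settings.py this could be applied with:
# globals().update(load_security_settings())
```

Defaulting DEBUG to off means a missing variable fails safe, which also keeps the settings file itself free of values a scanner would flag.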

Semgrep’s p/django ruleset covers all of these patterns and more. For teams that want maximum Django security coverage, running Semgrep with the Django ruleset alongside Bandit provides comprehensive protection:

# Combined Django security scanning
semgrep --config p/django --config p/python src/
bandit -r src/ -ll # Only medium and high severity

Flask-specific security concerns

Flask’s “batteries not included” philosophy means security features that Django provides by default must be explicitly added:

No default CSRF protection: Flask applications need Flask-WTF or similar extensions for CSRF protection. A security scanner should verify that CSRF protection is installed and enabled on all state-changing endpoints.

No default XSS protection in all contexts: Jinja2 auto-escapes HTML in templates, but not in JavaScript contexts, CSS contexts, or URL attributes. A common Flask vulnerability is inserting user input into an onclick handler or a <script> block where HTML escaping is insufficient.

Debug mode exposure: Flask’s debug mode includes an interactive debugger that allows arbitrary code execution. A security scanner should verify that app.debug is False and app.run(debug=True) is not present in production code.

Secret key management: Flask’s session signing depends on app.secret_key. Hardcoded or weak secret keys compromise session integrity.

FastAPI security considerations

FastAPI has gained enormous adoption for Python APIs and introduces its own security patterns:

Input validation through Pydantic: FastAPI uses Pydantic models for automatic request validation, which prevents many injection attacks. However, endpoints that read the raw Request object directly, or that declare their body as a plain dict or Any, bypass this validation. Security scanning should flag endpoints that do not use Pydantic models.

Dependency injection security: FastAPI’s dependency injection system is powerful but can be misused. Dependencies that access databases or external services should include proper error handling and timeout configuration.

CORS misconfiguration: FastAPI’s CORSMiddleware is commonly misconfigured with allow_origins=["*"] during development and left that way in production. Security scanners should flag overly permissive CORS settings.
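As an illustration of the CORS point, the origin list can be validated at startup so that a wildcard never reaches production. This is a sketch under assumptions: parse_allowed_origins and the CORS_ORIGINS variable are hypothetical names, and the policy (HTTPS only, with localhost allowed for development) is one possible choice.

```python
from urllib.parse import urlsplit


def parse_allowed_origins(raw: str) -> list[str]:
    """Parse a comma-separated origin list and reject permissive entries."""
    origins = [item.strip().rstrip("/") for item in raw.split(",") if item.strip()]
    if not origins:
        raise ValueError("no CORS origins configured")
    for origin in origins:
        if "*" in origin:
            raise ValueError(f"wildcard origin not allowed: {origin}")
        parts = urlsplit(origin)
        if not parts.netloc:
            raise ValueError(f"origin must include scheme and host: {origin}")
        if parts.scheme != "https" and parts.hostname != "localhost":
            raise ValueError(f"origin must use https: {origin}")
    return origins


# Hypothetical wiring in a FastAPI app:
# app.add_middleware(
#     CORSMiddleware,
#     allow_origins=parse_allowed_origins(os.environ["CORS_ORIGINS"]),
# )
```

Failing at startup turns a silent misconfiguration into a deployment error, which is easier to catch than a permissive header in production.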

Building your Python code review stack

No single tool covers every dimension of Python code quality. The most effective Python code review setups layer tools from different categories, each handling what it does best. Here are recommended stacks for different team sizes and needs.

Minimal stack (solo developer or small team)

For a solo developer or a team of 2 to 5 people working on a single Python project, this stack provides strong coverage with minimal configuration:

Ruff for linting and formatting - Handles all syntactic issues, import sorting, and code formatting in a single tool. Configure it in pyproject.toml and add it to pre-commit hooks.

mypy for type checking - Add type annotations incrementally and run mypy in CI to catch type errors before they merge.

CodeRabbit for AI review - The free tier provides unlimited AI reviews on every pull request, catching logic errors and suggesting improvements that linters miss.

# .github/workflows/python-quality.yml
name: Python Quality
on: [pull_request]
jobs:
  quality:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install ruff mypy
      - name: Ruff lint
        run: ruff check src/
      - name: Ruff format check
        run: ruff format --check src/
      - name: mypy type check
        run: mypy src/
# CodeRabbit runs automatically via GitHub App - no CI config needed

Total cost: $0 (all tools are free at this scale).

Standard stack (mid-size team, 5-20 developers)

For a team of 5 to 20 developers working on one or more Python services, add security scanning and deeper analysis:

Ruff for linting and formatting (pre-commit and CI).

mypy for type checking (CI).

Pylint for deep analysis (CI only - too slow for pre-commit on large codebases).

Bandit + Semgrep for security scanning. Run Bandit for Python-specific vulnerabilities and Semgrep with the Django or Flask ruleset for framework-specific issues.

CodeRabbit or Sourcery for AI review. Choose CodeRabbit for multi-language teams or Sourcery for Python-heavy teams that want the deepest Python-specific refactoring suggestions.

# .github/workflows/python-quality.yml
name: Python Quality
on: [pull_request]
jobs:
  lint:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install ruff mypy pylint pylint-django bandit
      - run: ruff check src/
      - run: ruff format --check src/
      - run: mypy src/
      - run: pylint --load-plugins pylint_django src/
      - run: bandit -r src/ -ll -f json -o bandit-report.json
  security:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: returntocorp/semgrep-action@v1
        with:
          config: >-
            p/python
            p/django
            p/flask

Total cost: $0-$24/user/month depending on AI review tool choice.
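The bandit-report.json file written by the Bandit step above can also be post-processed, for example to block merges only on high-severity findings. The sketch below relies on the results, issue_severity, filename, line_number, test_id, and issue_text fields of Bandit's JSON output; the function names and the default threshold are assumptions. Because Bandit exits non-zero when it reports issues, a later step like this only runs if the Bandit step uses --exit-zero or is allowed to fail.

```python
import json

SEVERITY_RANK = {"LOW": 0, "MEDIUM": 1, "HIGH": 2}


def blocking_findings(report: dict, threshold: str = "HIGH") -> list[str]:
    """Return one summary line per finding at or above the threshold."""
    minimum = SEVERITY_RANK[threshold]
    lines = []
    for issue in report.get("results", []):
        if SEVERITY_RANK.get(issue["issue_severity"], 0) >= minimum:
            lines.append(
                f"{issue['filename']}:{issue['line_number']} "
                f"{issue['test_id']} {issue['issue_text']}"
            )
    return lines


def gate(report_path: str, threshold: str = "HIGH") -> int:
    """Hypothetical entry point: print blocking findings, return an exit code."""
    with open(report_path) as handle:
        findings = blocking_findings(json.load(handle), threshold)
    for line in findings:
        print(line)
    return 1 if findings else 0
```

A CI step could call gate("bandit-report.json") and pass the result to sys.exit(), so lower-severity findings remain visible in the report without blocking the pull request.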

Enterprise stack (20+ developers, compliance requirements)

For larger teams with compliance needs, quality gate enforcement, and multiple Python services:

Ruff for linting and formatting (pre-commit and CI).

mypy for type checking (CI).

SonarQube or DeepSource for centralized quality tracking with quality gates, trend analysis, and compliance reporting. SonarQube offers the deepest rule set; DeepSource offers lower false positives and better AI integration.

Semgrep or Snyk Code for security. Semgrep for teams that want custom rule authoring and open-source flexibility; Snyk Code for teams that want cross-file dataflow analysis and combined SAST/SCA.

CodeRabbit for AI review at scale. The enterprise tier provides SSO, self-hosted deployment options, and custom review profiles.

Codacy or Qlty for unified quality metrics across all Python services, if the team needs a single dashboard covering multiple repositories.

# Enterprise CI pipeline - comprehensive Python analysis
name: Python Quality Gate
on: [pull_request]
jobs:
  lint-and-type:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install ruff mypy django-stubs
      - run: ruff check src/ --output-format=github
      - run: ruff format --check src/
      - run: mypy src/ --junit-xml=mypy-results.xml
  sonarqube:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0
      - uses: SonarSource/sonarqube-scan-action@v5
        env:
          SONAR_TOKEN: ${{ secrets.SONAR_TOKEN }}
          SONAR_HOST_URL: ${{ secrets.SONAR_HOST_URL }}
  security:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Snyk Code test
        # Snyk Code runs through a language action with the "code test" command
        uses: snyk/actions/python@master
        with:
          command: code test
        env:
          SNYK_TOKEN: ${{ secrets.SNYK_TOKEN }}
      - name: Snyk dependency test
        uses: snyk/actions/python@master
        env:
          SNYK_TOKEN: ${{ secrets.SNYK_TOKEN }}

Total cost: Varies significantly based on tool selection and team size. Budget $20-$60/developer/month for the platform tools (SonarQube/DeepSource + Snyk/Semgrep + CodeRabbit).

Data science and ML stack

Python data science and machine learning codebases have unique quality concerns - notebook code quality, pandas performance patterns, model serialization security, and experiment reproducibility. A tailored stack addresses these:

Ruff for linting with the NPY (NumPy-specific) and PD (pandas-vet) rule sets enabled, which catch common anti-patterns such as inplace=True arguments, .values access, and deprecated NumPy type aliases.

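mypy with NumPy’s bundled type hints and pandas-stubs for type checking data science code. Annotations on functions that accept or return DataFrames and arrays catch many misuse errors before runtime, although column names and array shapes are largely outside what mypy can verify.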

Bandit with extra emphasis on pickle and YAML deserialization rules - ML pipelines frequently load serialized models and config files, both of which are common attack vectors.

DeepSource or CodeRabbit for AI review. Both are effective at reviewing data processing logic and flagging potential issues in transformation pipelines.
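To show why pickle-related findings matter in ML pipelines, the sketch below follows the allow-list approach described in the Python pickle documentation: an Unpickler subclass that refuses to resolve any global outside a small safe set. The SAFE_BUILTINS contents are an illustrative assumption; real model artifacts would need a broader allow-list or a non-pickle format.

```python
import builtins
import io
import pickle

# Only these builtins may be resolved while unpickling (illustrative set).
SAFE_BUILTINS = {"range", "complex", "set", "frozenset", "slice"}


class RestrictedUnpickler(pickle.Unpickler):
    def find_class(self, module: str, name: str):
        # Called whenever the pickle stream references a global object.
        if module == "builtins" and name in SAFE_BUILTINS:
            return getattr(builtins, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Drop-in analogue of pickle.loads() with the allow-list applied."""
    return RestrictedUnpickler(io.BytesIO(data)).load()
```

Plain containers and numbers load as usual, while a payload that references something like os.system raises UnpicklingError before the call can happen.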

# pyproject.toml - Data science Ruff configuration

[tool.ruff.lint]

select = [

"E", "W", "F", "I", "N", "UP", "B", "S",

"NPY", # NumPy-specific rules

"PD", # pandas-vet rules

"PT", # pytest style

"SIM", # simplify

"RUF", # Ruff-specific

]

Integrating Python code review into your workflow

Having the right tools is only half the equation. How you integrate them into your workflow determines whether they actually improve code quality or just add friction.

Pre-commit hooks for instant feedback

The fastest feedback loop is a pre-commit hook that runs before code even reaches a pull request. For Python, the ideal pre-commit configuration runs Ruff for linting and formatting, which completes in under a second for most changesets:

# .pre-commit-config.yaml
repos:
  - repo: https://github.com/astral-sh/ruff-pre-commit
    rev: v0.9.0
    hooks:
      - id: ruff
        args: [--fix]
      - id: ruff-format
  - repo: https://github.com/pre-commit/mirrors-mypy
    rev: v1.14.0
    hooks:
      - id: mypy
        additional_dependencies: [django-stubs, types-requests]

The key principle is that pre-commit hooks should be fast. If a hook takes more than 5 seconds, developers will bypass it with --no-verify. Ruff and ruff format meet this bar easily. mypy in pre-commit can be slow on large codebases - consider running it only on changed files or moving it to CI only.

CI/CD for comprehensive analysis

CI is where you run the slower, deeper analysis tools that would be too slow for pre-commit. Pylint, Bandit, Semgrep, and platform tools like SonarQube or DeepSource should all run in CI on every pull request.

Structure your CI pipeline so that fast checks run first and slow checks run in parallel:

- Fast (< 30 seconds): Ruff lint, Ruff format check

- Medium (30 seconds to 2 minutes): mypy, Bandit

- Slow (2+ minutes): Pylint, Semgrep, SonarQube scan, Snyk Code test

- Async (runs in background): CodeRabbit/Sourcery AI review (triggered by PR event, runs independently)

This structure ensures developers get fast feedback on style and formatting issues within seconds, while deeper analysis runs in parallel without blocking the quick results.
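The same tiering can be reproduced locally with a small runner. This is a sketch, not a replacement for CI configuration: run_check, run_pipeline, and the example command lists are hypothetical, and stopping at the first failed fast check is a design choice made here for quick feedback.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor

# Example tiers; the commands assume the tools are installed and code lives in src/.
FAST_CHECKS = [
    ("ruff-lint", ["ruff", "check", "src/"]),
    ("ruff-format", ["ruff", "format", "--check", "src/"]),
]
SLOW_CHECKS = [
    ("mypy", ["mypy", "src/"]),
    ("pylint", ["pylint", "src/"]),
    ("bandit", ["bandit", "-r", "src/", "-ll"]),
]


def run_check(name: str, command: list[str]) -> tuple[str, int]:
    """Run one tool and return its name with the process exit code."""
    completed = subprocess.run(command, capture_output=True, text=True)
    return name, completed.returncode


def run_pipeline(fast: list, slow: list) -> dict[str, int]:
    results: dict[str, int] = {}
    # Tier 1: fast checks run one by one; stop early on the first failure.
    for name, command in fast:
        _, code = run_check(name, command)
        results[name] = code
        if code != 0:
            return results
    # Tier 2: slow checks run concurrently once the fast tier has passed.
    with ThreadPoolExecutor(max_workers=len(slow) or 1) as pool:
        for name, code in pool.map(lambda item: run_check(*item), slow):
            results[name] = code
    return results
```

Calling run_pipeline(FAST_CHECKS, SLOW_CHECKS) returns a mapping of check name to exit code, which a wrapper script can turn into a single pass or fail result.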

Configuring tools to work together without overlap

A common mistake is running multiple tools that cover the same rules, resulting in duplicate findings. Here is how to minimize overlap:

Ruff replaces flake8, isort, pycodestyle, and pyflakes. If you adopt Ruff, remove these tools entirely.

Ruff partially overlaps with Bandit. Ruff includes the flake8-bandit ruleset (S prefix), which covers the most common Bandit checks. If you run both, either disable the S rules in Ruff or accept the small amount of duplication.

mypy and Pylint overlap on type-related checks. Pylint performs basic type inference, but mypy is more thorough. If you run both, configure Pylint to skip its type checking categories and focus on code quality checks that mypy does not cover.

SonarQube/DeepSource/Codacy overlap with standalone linters. If you adopt a platform tool, you can often disable the standalone linters it wraps (since the platform runs them internally). Check the platform’s documentation for which tools it includes.

# Pylint configuration when used alongside mypy and Ruff

# pyproject.toml

[tool.pylint.messages_control]

# Disable checks that Ruff handles better/faster

disable = [

"C0114", # missing-module-docstring (use Ruff's D rules)

"C0115", # missing-class-docstring

"C0116", # missing-function-docstring

"C0301", # line-too-long (Ruff handles this)

"C0303", # trailing-whitespace (Ruff handles this)

"W0611", # unused-import (Ruff handles this)

"W0612", # unused-variable (Ruff handles this)

]

How we evaluated these tools

To provide accurate recommendations, we evaluated each tool against Python-specific criteria that matter in real production environments.

Python version support: Does the tool support recent Python features, including the type alias syntax added in 3.12 (type X = ...), structural pattern matching (match/case, added in 3.10), and exception groups (added in 3.11)? Tools that lag behind Python releases create friction for teams that want to use the latest language features.

Framework awareness: Does the tool understand Django, Flask, and FastAPI patterns? A tool that flags Django’s Meta class as unused or does not recognize Flask’s @app.route decorator as a public API produces noise that erodes developer trust.

Type annotation support: Does the tool leverage type annotations for deeper analysis? Python’s type system has evolved rapidly (PEP 604 union syntax, PEP 612 ParamSpec, PEP 695 type alias syntax), and tools that understand modern annotations provide better results.

False positive rate on Python code: Dynamic typing and Python’s metaprogramming features (descriptors, metaclasses, __getattr__) cause many static analysis tools to produce false positives. We prioritized tools with demonstrably low false positive rates on real Python code.

CI/CD integration: Can the tool run in GitHub Actions, GitLab CI, and other common CI systems? Does it provide structured output (JSON, SARIF) for integration with other tools? Can it be configured as a required check that blocks merging?

Performance on Python codebases: How long does the tool take to analyze a 50,000-line Python project? Tools that take more than 5 minutes to complete frustrate developers and slow down the PR review cycle.

Conclusion

Python’s dynamic nature, rich framework ecosystem, and prevalence in security-sensitive domains - web applications, data pipelines, machine learning infrastructure - make it a language that benefits enormously from layered code review tooling. No single tool covers every dimension of Python code quality, but the right combination provides coverage that far exceeds what human reviewers can achieve alone.

For linting, Ruff has become the clear standard. It is faster, covers more rules, and requires less configuration than any alternative. Teams still using flake8 should migrate - the effort is minimal and the speed improvement is dramatic.

For type checking, mypy remains the standard for CI/CD, while Pyright through Pylance provides the best IDE experience. The two tools complement each other, and most Python teams benefit from running both.

For security scanning, the choice depends on your depth requirements. Bandit provides a strong free baseline for Python-specific vulnerabilities. Semgrep adds framework-specific rules for Django and Flask plus custom rule authoring. Snyk Code adds cross-file dataflow analysis that catches vulnerabilities spanning multiple modules.

For AI-powered review, Sourcery provides the deepest Python-specific analysis with refactoring suggestions that teach Pythonic patterns. CodeRabbit provides the best overall AI review quality with a generous free tier. DeepSource combines AI with static analysis for the lowest false positive rate.

For full quality platforms, SonarQube offers the deepest rule set and quality gate enforcement for enterprise teams. Codacy offers the best value for small to mid-size teams. Qlty offers the best unified experience for polyglot codebases that include Python alongside other languages.

Start with the minimal stack - Ruff, mypy, and a free AI reviewer - and add tools as your team and codebase grow. The goal is not to run every tool on this list. The goal is to build a code review workflow where Python-specific bugs, security vulnerabilities, and quality issues are caught automatically, freeing your human reviewers to focus on architecture, business logic, and the concerns that only humans can evaluate.

Frequently Asked Questions

What is the best Python linter in 2026?

Ruff has become the dominant Python linter - 10-100x faster than Pylint/flake8, covers 800+ rules from multiple tools, and handles formatting too. For teams starting fresh, Ruff is the clear choice. Pylint still offers deeper analysis for teams already invested in it.

What is the best AI code review tool for Python?

Sourcery is purpose-built for Python and provides the most Python-specific suggestions. CodeRabbit offers the best overall AI review with strong Python support. DeepSource combines AI analysis with autofix specifically tuned for Python patterns.

Is Pylint still worth using?

Pylint remains the deepest Python-specific linter with checks that Ruff cannot replicate, like complex type inference and custom plugin support. However, Ruff covers 80%+ of common linting needs at dramatically faster speeds. Many teams use Ruff for speed and add Pylint in CI for deep analysis.

What tools detect Python security vulnerabilities?

Bandit (free, Python-specific), Semgrep (free OSS with Python rules), Snyk Code (cross-file dataflow analysis), and SonarQube (broad coverage with Python-specific rules). For Django/Flask-specific security, Semgrep has dedicated rulesets covering injection, CSRF, and authentication issues.

Should I use mypy or pyright for Python type checking?

Mypy is the established standard with broad ecosystem support. Pyright (from Microsoft) is faster and used by Pylance in VS Code. For CI/CD, mypy is more commonly integrated. For IDE experience, Pyright through Pylance is superior. Many teams use both - Pyright in the IDE and mypy in CI.

How do I set up automated code review for a Python project?

Start with Ruff for linting and formatting (replaces flake8, isort, black). Add mypy for type checking. Then add an AI review tool like CodeRabbit or Sourcery for logic-level analysis. Finally, add Semgrep or Bandit for security scanning. All can run in GitHub Actions or GitLab CI.

Is Ruff a replacement for flake8 and Black?

Yes. Ruff reimplements the rules of flake8, isort, Black, pyupgrade, and over a dozen other Python tools in a single Rust-based binary. It covers 800+ lint rules and includes a Black-compatible formatter. For new Python projects in 2026, Ruff is the recommended default that replaces the entire legacy linting toolchain.

How much do Python code review tools cost?

Many Python code review tools are completely free. Ruff, Pylint, mypy, Pyright, and Bandit are all open source. CodeRabbit offers unlimited free AI reviews on public and private repos. Paid tools like Sourcery ($10/user/month), DeepSource ($24/user/month), and Semgrep ($35/dev/month for 10+ contributors) add deeper analysis and team features.

What is the best code review tool for Django projects?

For Django specifically, combine Semgrep with the p/django ruleset for security scanning (SQL injection, CSRF, XSS), Pylint with pylint-django for deep code analysis, and CodeRabbit or Sourcery for AI-powered logic review. Ruff's flake8-django rules (DJ prefix) also catch Django-specific issues like missing model __str__ methods.

Can AI code review tools catch Python security vulnerabilities?

Yes, but they work best combined with dedicated security scanners. AI tools like CodeRabbit and Sourcery catch logic-level security issues such as missing input validation and improper error handling. Dedicated SAST tools like Bandit, Semgrep, and Snyk Code catch specific vulnerability patterns like SQL injection, hardcoded secrets, and insecure deserialization more reliably, though their findings still need triage for false positives.
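To make the SQL injection case concrete, here is a small sketch using the standard library's `sqlite3` module. The table and function names are invented for the example. The first query builds SQL by string interpolation, which is the kind of pattern rule-based scanners are designed to report; the second binds the value as a parameter.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two demo users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, name):
    # User input is interpolated into the SQL text, so it can change the query.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # The driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()
```

Passing the input `x' OR '1'='1` to `find_user_unsafe` returns every row in the table, while `find_user_safe` returns nothing for the same input.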

What is the difference between Ruff and Pylint?

Ruff is a fast linter that works mostly at the level of a single file's tokens and AST, covering 800+ rules at 10-100x the speed of Pylint. Pylint performs deeper semantic analysis, including type inference across expressions and modules. Many teams use both - Ruff in pre-commit hooks for instant feedback and Pylint in CI for the deep analysis that Ruff cannot replicate.
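The two kinds of analysis can be illustrated with one short file. In this sketch (all names are made up), the mutable default argument is visible from the syntax alone and matches the flake8-bugbear rule B006 that Ruff implements. The misspelled attribute can only be found by inferring what `report` is, which is the territory of Pylint's no-member check (E1101); treat the exact tool behavior as an assumption to verify against your own setup.

```python
def add_tag(tag: str, tags: list[str] = []) -> list[str]:
    # Syntactic issue: a mutable default argument is visible in the AST alone.
    # The same list is shared across calls that omit `tags`.
    tags.append(tag)
    return tags


class Report:
    def __init__(self, title: str) -> None:
        self.title = title


def headline() -> str:
    report = Report("Q1 results")
    # Semantic issue: "titel" is a typo. Spotting it requires inferring that
    # `report` is a Report instance and knowing which attributes it has.
    return report.titel.upper()
```

Both bugs survive until runtime: repeated `add_tag` calls accumulate tags in the shared list, and `headline()` raises `AttributeError` when it is called.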

How do I reduce false positives from Python static analysis tools?

Choose tools with low false positive rates, such as DeepSource (which advertises a sub-5% false positive rate). Configure per-file ignores for generated code, migrations, and test files. Use baseline features to suppress existing issues and only enforce rules on new code. Disable rules that consistently produce unhelpful findings for your specific codebase.
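The baseline idea can be sketched in a few lines. This is not any particular tool's implementation; `Finding`, `new_findings`, and the ignored path prefixes are hypothetical names chosen for illustration. Findings already recorded in the baseline, or located under ignored paths, are filtered out so that only new issues fail the build.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    path: str
    rule: str
    message: str


# Hypothetical path prefixes for generated code and migrations.
IGNORED_PREFIXES = ("migrations/", "generated/")


def new_findings(current, baseline, ignored_prefixes=IGNORED_PREFIXES):
    """Return findings that are neither in the baseline nor under an ignored path."""
    known = set(baseline)
    return [
        f for f in current
        if f not in known and not f.path.startswith(ignored_prefixes)
    ]
```

Line numbers are deliberately left out of `Finding` in this sketch, since they shift whenever unrelated code is edited and would make old findings look new.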

What Python code review tools work with FastAPI?

CodeRabbit can review FastAPI endpoints for missing response models, improper error handling, and async patterns. Semgrep has rules for FastAPI security issues including CORS misconfiguration and input validation bypass. Ruff catches general Python issues in FastAPI code, and mypy with the Pydantic plugin validates the type annotations used in FastAPI schemas.

Do Python code review tools support GitHub Actions?

Yes, virtually all Python code review tools integrate with GitHub Actions. Ruff, mypy, Pylint, and Bandit run as standard CLI commands in workflow steps. CodeRabbit and Sourcery install as GitHub Apps that trigger automatically on pull requests. Semgrep and SonarQube provide official GitHub Actions. DeepSource and Codacy also offer native GitHub integration.
