Qodo vs Cody (Sourcegraph): AI Code Review Compared (2026)

Qodo vs Cody compared on code review, codebase context, test generation, pricing, and enterprise fit. Which AI coding tool wins in 2026?

Quick Verdict

Qodo and Sourcegraph Cody are both AI tools for software teams, but they solve fundamentally different problems. Qodo is a code quality platform - it reviews pull requests automatically, finds bugs through a multi-agent architecture, and generates tests to fill coverage gaps without being asked. Cody is a codebase-aware AI coding assistant - it understands your entire repository and helps developers navigate, generate, and understand code through conversation and inline completions.

Choose Qodo if: your team needs automated PR review that runs on every pull request without prompting, you want proactive test generation that closes coverage gaps systematically, you work on GitLab or Azure DevOps alongside GitHub, or the open-source transparency of PR-Agent matters to your organization.

Choose Cody if: your team needs an AI assistant that understands your entire codebase and can answer questions about it, you want smarter code completions informed by your repository’s patterns, you value Bring Your Own Key (BYOK) model flexibility, or developer productivity during coding is the primary metric.

The key difference in practice: Qodo is a gatekeeper that improves code quality at review time - it runs automatically and produces structured findings without developer prompting. Cody is a collaborator that accelerates coding during development - it responds to developer queries and generates code with full awareness of your existing codebase. These tools are more complementary than competitive.

Why This Comparison Matters

Qodo and Cody surface in the same evaluation shortlists when development teams search for “AI tools for code quality” or “AI coding assistant for large codebases.” The tools look adjacent from the outside - both use AI, both analyze code, both integrate into the IDE - but the comparison dissolves quickly once you examine what each tool actually does moment-to-moment in a developer’s workflow.

Qodo began as CodiumAI in 2022 with test generation as its founding purpose. The platform evolved into a full code quality system, and the February 2026 release of Qodo 2.0 introduced a multi-agent review architecture that outperformed seven other AI code review tools in benchmark testing with a 60.1% F1 score. Qodo earned recognition as a Visionary in the Gartner Magic Quadrant for AI Code Assistants 2025 and has raised $40 million in Series A funding.

Cody is Sourcegraph’s AI coding assistant, built on top of Sourcegraph’s code intelligence and search infrastructure - a platform that has indexed billions of lines of code for enterprise teams since 2013. Cody’s core differentiator is context: where most AI coding assistants are limited to the open file or a small context window, Cody retrieves relevant code from across your entire repository - or across all repositories in your organization - to inform its responses. This makes Cody particularly useful for navigating large codebases.

Both tools are mature, backed by serious funding, and serve genuine enterprise use cases. The comparison is not about which tool is better overall - it is about which workflow problem each tool solves, and which one your team needs solved.

For related context, see our Qodo vs GitHub Copilot comparison, our best AI code review tools roundup, and the state of AI code review in 2026.

At-a-Glance Comparison

| Dimension | Qodo | Sourcegraph Cody |
|---|---|---|
| Primary focus | AI PR code review + test generation | Codebase-aware AI coding assistant |
| Code completion | No | Yes - core feature, all plans |
| Automated PR review | Yes - multi-agent, runs on every PR | No - chat-based review only |
| Test generation | Yes - proactive, coverage-gap detection | Yes - on request, codebase-aware |
| Codebase context | Multi-repo PR intelligence (Enterprise) | Full repository indexing (all plans) |
| Review benchmark | 60.1% F1 score (highest among 8 tested) | Not independently benchmarked |
| Context scope | Cross-repo PR impact analysis | All repos, full codebase semantic search |
| Git platforms | GitHub, GitLab, Bitbucket, Azure DevOps | GitHub, GitLab, Bitbucket, Gerrit |
| IDE support | VS Code, JetBrains | VS Code, JetBrains, Neovim, Emacs |
| BYOK / model flexibility | Multiple models via credits | BYOK for Claude, GPT-4, Gemini |
| Open-source components | PR-Agent (review engine) | Cody clients (Apache 2.0) |
| On-premise deployment | Yes (Enterprise) | Yes (Enterprise, self-hosted Sourcegraph) |
| Air-gapped deployment | Yes (Enterprise) | Varies by configuration |
| Zero data retention | Teams and Enterprise plans | Enterprise plan |
| Free tier | 30 PR reviews + 250 IDE/CLI credits/month | 200 autocomplete/day + limited chat |
| Paid starting price | $30/user/month (Teams) | $9/user/month (Pro) |
| Enterprise price | Custom | Custom |
| Language support | 10+ major languages | All major languages |
| Gartner recognition | Visionary (AI Code Assistants 2025) | Not listed |
| Code search | No | Yes - Sourcegraph code search included |

What Is Qodo?

Qodo (formerly CodiumAI) is an AI-powered code quality platform centered on two capabilities that work together: automated PR review and proactive test generation. Founded in 2022 and rebranded as the platform expanded beyond its test-generation origins, Qodo raised $40 million in Series A funding and earned Gartner Visionary recognition in 2025.

The platform has four components: a Git plugin for automated PR review across GitHub, GitLab, Bitbucket, and Azure DevOps; an IDE plugin for VS Code and JetBrains that brings shift-left review and test generation into the development environment; a CLI plugin for terminal-based quality workflows; and an Enterprise-tier context engine for multi-repo intelligence.

The February 2026 Qodo 2.0 release replaced a single-model approach with a multi-agent review architecture. Specialized agents collaborate simultaneously on bug detection, code quality analysis, security pattern identification, and test coverage gap detection. The combined output produces line-level review comments, a PR walkthrough, a risk assessment, and - where coverage gaps exist - generated tests ready to commit. In benchmark testing across eight AI code review tools, this architecture achieved the highest F1 score of 60.1% with a 56.7% recall rate.

Qodo’s open-source PR-Agent foundation gives it a meaningful transparency advantage over fully proprietary tools. Teams can inspect the review logic, deploy in self-hosted environments, and benefit from community contributions.

For a full feature breakdown, see the Qodo tool review.

What Is Sourcegraph Cody?

Sourcegraph Cody is an AI coding assistant built on Sourcegraph’s code intelligence platform - a system that has indexed and made searchable billions of lines of enterprise code since 2013. Cody’s defining characteristic is what Sourcegraph calls “context retrieval at scale”: the ability to pull relevant code, patterns, and definitions from across your entire codebase - not just the current file - when generating completions or responding to chat queries.

The platform covers inline code completion (available across VS Code, JetBrains, Neovim, and Emacs), an AI chat interface for code questions and generation, code navigation powered by Sourcegraph’s language-aware indexing, and an Enterprise tier that extends all capabilities across all repositories in an organization with SSO, SAML, and self-hosted deployment.

Cody’s model flexibility sets it apart from tools tied to a single provider. The Free and Pro tiers offer access to Claude, GPT-4, and Gemini models. Enterprise customers can use Bring Your Own Key (BYOK) to route inference calls through their own API keys and, in some configurations, their own model endpoints. This makes Cody uniquely compatible with organizations that have existing LLM vendor relationships or procurement preferences.

For teams on the Sourcegraph platform already, Cody integrates directly with Sourcegraph’s code search - developers can move fluidly between searching the codebase and asking Cody to explain or extend what they find.

For a full feature breakdown, see the Sourcegraph Cody tool review.

Feature-by-Feature Breakdown

Code Review - Automated vs Conversational

Code review is where the two tools diverge most fundamentally in approach, not just in depth.

Qodo’s automated PR review runs without any developer action after initial setup. Every pull request receives a structured review: a PR walkthrough summarizing the change, line-level comments from specialized agents covering bugs, quality issues, and security patterns, a test coverage gap analysis, and a risk level assessment. The multi-agent architecture runs these dimensions in parallel - a bug detection agent, a code quality agent, a security agent, and a test coverage agent each contribute to the final output simultaneously.
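To make the "agents in parallel" idea concrete, here is a minimal sketch of the general fan-out and merge pattern. It is not Qodo's implementation: the agent functions, the `Finding` type, and the string-matching rules are all hypothetical stand-ins, and only three of the four agent roles are shown for brevity.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Finding:
    agent: str
    line: int
    message: str


# Hypothetical agents: each inspects the same diff and returns its own findings.
def bug_agent(diff: list[str]) -> list[Finding]:
    return [Finding("bug", i, "comparison with None should use 'is'")
            for i, text in enumerate(diff, start=1) if "== None" in text]


def security_agent(diff: list[str]) -> list[Finding]:
    return [Finding("security", i, "avoid eval on untrusted input")
            for i, text in enumerate(diff, start=1) if "eval(" in text]


def coverage_agent(diff: list[str]) -> list[Finding]:
    return [Finding("coverage", i, "new function has no matching test")
            for i, text in enumerate(diff, start=1) if text.lstrip().startswith("def ")]


def review(diff: list[str]) -> list[Finding]:
    agents = [bug_agent, security_agent, coverage_agent]
    # Run every agent concurrently on the same input, then merge by line number.
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        results = pool.map(lambda agent: agent(diff), agents)
    merged = [finding for findings in results for finding in findings]
    return sorted(merged, key=lambda f: (f.line, f.agent))


diff = ["def load(cfg):", "    if cfg == None:", "        return eval(cfg_default)"]
for finding in review(diff):
    print(f"line {finding.line} [{finding.agent}] {finding.message}")
```

The point of the pattern is that each agent stays narrow and independent, while the merge step produces one ordered set of line-level comments for the reviewer.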

In benchmark testing across eight AI code review tools, Qodo 2.0 achieved the highest F1 score of 60.1%. F1 is the harmonic mean of precision and recall, so the top score means Qodo struck the best balance between catching real bugs and avoiding false positives among the tools tested. This benchmark matters because Qodo’s entire business is built around maximizing review accuracy, and the multi-agent investment is concentrated entirely on that goal.
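For readers who want to see how the benchmark numbers relate, the sketch below applies the standard F1 definition, F1 = 2PR / (P + R), to the 60.1% F1 and the 56.7% recall reported earlier in this article. The precision value it prints is derived from those two rounded figures and is an assumption, not a number published in the benchmark.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def precision_from_f1(f1: float, recall: float) -> float:
    """Solve F1 = 2PR / (P + R) for P, giving P = F1 * R / (2R - F1)."""
    return f1 * recall / (2 * recall - f1)


recall = 0.567  # reported recall
f1 = 0.601      # reported F1
implied_precision = precision_from_f1(f1, recall)
print(f"implied precision: {implied_precision:.3f}")
```

Under these assumptions the implied precision is roughly 64%, which would mean that about two in three flagged issues were real while a little over half of the real issues were found.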

Cody’s code review capability is conversational. There is no automated workflow that runs on every PR. Instead, developers can paste code into Cody’s chat interface, describe what they want reviewed, and receive an AI response informed by Cody’s understanding of the surrounding codebase. Cody can identify bugs, suggest improvements, and explain potential issues - and because it has full codebase context, it can make observations that file-scoped tools miss, such as inconsistencies with patterns used elsewhere in the repository.

This conversational approach requires developer initiative. Cody will not automatically post a comment on your pull request or generate a test for an uncovered branch. It responds to prompts. For teams that want systematic, zero-friction review on every PR, this is a significant limitation. For teams that want a smart collaborator available on demand for targeted review questions, Cody’s approach is more flexible.

The practical implication: Qodo operates as a quality gate - it runs automatically and produces consistent findings without depending on developer discipline. Cody operates as a knowledgeable collaborator - it produces deeper, more codebase-aware answers when asked, but requires asking. For organizations evaluating both, the right framing is not “which one reviews code better” but “which review model fits our team’s workflow.”

Codebase Context and Intelligence

Codebase context is where Cody holds a meaningful architectural advantage at lower price points.

Cody’s context retrieval is built on Sourcegraph’s code graph infrastructure - the same technology that powers enterprise code search for organizations with thousands of repositories. When you ask Cody a question, it performs a semantic search across your indexed repositories to retrieve the most relevant context: function definitions, usage patterns, related tests, documentation, and architectural conventions. This retrieval happens across all repositories in your organization on the Enterprise plan.

In practice, this means a developer onboarding into a large codebase can ask Cody “how does this service handle authentication?” and receive a synthesized answer drawn from the actual authentication code across multiple files and services - not a generic answer from training data. A developer making a change to a shared library can ask “what other services call this function?” and get a precise list with examples. This kind of codebase intelligence is what differentiates Cody from tools that are effectively sophisticated autocomplete engines.
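The retrieve-then-answer flow described above can be illustrated with a toy example. This is not how Sourcegraph implements retrieval: it swaps in simple word overlap for real semantic search and a code graph, and the snippet paths and contents are hypothetical.

```python
import re
from collections import Counter

# Hypothetical indexed snippets, keyed by file path.
SNIPPETS = {
    "auth/middleware.py": "# authentication middleware for the service\ndef require_login(handler): ...",
    "auth/tokens.py": "# verify authentication tokens\ndef verify(token): ...",
    "billing/invoice.py": "# invoice totals\ndef total(invoice): ...",
}


def tokens(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for a real code index."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))


def retrieve(question: str, snippets: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k snippet paths that share the most words with the question."""
    q = tokens(question)
    scores = {path: sum((q & tokens(body)).values()) for path, body in snippets.items()}
    ranked = sorted(scores, key=lambda path: (-scores[path], path))
    return [path for path in ranked[:k] if scores[path] > 0]


def build_prompt(question: str, snippets: dict[str, str], k: int = 2) -> str:
    """Prepend the retrieved snippets to the question before calling a model."""
    context = "\n\n".join(f"# {path}\n{snippets[path]}" for path in retrieve(question, snippets, k))
    return f"{context}\n\nQuestion: {question}"


question = "How does this service handle authentication?"
print(retrieve(question, SNIPPETS))
```

Even in this simplified form, the model would see the two authentication files and not the billing code, which is the essential difference from answering out of training data alone.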

Qodo’s context engine (available on the Enterprise plan) focuses specifically on multi-repo PR intelligence. It analyzes PR history across repositories, learns from past review patterns and team feedback, and understands cross-service dependencies for the purpose of deeper review accuracy. If a change to a shared API in one repository could break consumers in three downstream services, Qodo’s context engine can surface that risk during PR review.
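Cross-repo impact analysis of this kind boils down to walking a dependency graph backwards from the changed component. The sketch below shows that idea under stated assumptions: the service names and the `DEPENDS_ON` map are hypothetical, and a production context engine would build the graph from real code and PR history instead of a hand-written dictionary.

```python
from collections import deque

# Hypothetical dependency map: each service lists the services it calls.
DEPENDS_ON = {
    "checkout": ["payments-api"],
    "invoicing": ["payments-api"],
    "notifications": ["invoicing"],
    "search": [],
    "payments-api": [],
}


def downstream_consumers(changed: str, depends_on: dict[str, list[str]]) -> list[str]:
    """Return every service that directly or transitively depends on `changed`."""
    # Invert the map so we can walk from a provider to its consumers.
    consumers: dict[str, list[str]] = {name: [] for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            consumers.setdefault(dep, []).append(service)

    # Breadth-first walk over the inverted graph.
    seen, queue = set(), deque([changed])
    while queue:
        for consumer in consumers.get(queue.popleft(), []):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return sorted(seen)


print(downstream_consumers("payments-api", DEPENDS_ON))
```

Here a change to the shared `payments-api` reaches three downstream services, including `notifications`, which never calls the API directly and would be easy to miss in a single-repo review.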

The two context systems serve different purposes and are available at different price points. Cody’s full repository indexing is available from the Pro plan at $9/user/month. Qodo’s cross-repo context engine requires the Enterprise plan. For teams that want broad codebase intelligence without an enterprise contract, Cody is the more accessible option. For teams that specifically need cross-repo impact analysis for review depth, Qodo’s Enterprise context engine is purpose-built for that workflow.

Test Generation - Proactive vs On-Demand

Test generation is a capability both tools offer, but the approach - and therefore the outcome - differs substantially.

Qodo’s test generation is proactive and automated. During PR review, Qodo’s test coverage agent identifies code paths in changed files that lack test coverage and generates unit tests to fill those gaps - without the developer requesting it. The tests appear alongside other review findings, in the project’s testing framework (Jest, pytest, JUnit, Vitest, and others), with assertions that exercise specific behaviors rather than placeholder stubs. In the IDE, the /test command generates complete test suites for selected code, analyzing behavior, edge cases, and error conditions systematically.
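The difference between a placeholder stub and a test that exercises specific behavior is easier to see in code. The example below is hand-written for illustration and is not output from Qodo; `apply_discount` is a hypothetical function, and the tests use plain `assert` statements in the style pytest collects.

```python
def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


# A placeholder stub: it passes, but verifies nothing about behavior.
def test_apply_discount_stub():
    assert apply_discount is not None


# Behavior-focused tests: a typical value, the boundaries, and the error path.
def test_typical_discount():
    assert apply_discount(200.0, 25) == 150.0


def test_boundaries():
    assert apply_discount(80.0, 0) == 80.0
    assert apply_discount(80.0, 100) == 0.0


def test_rejects_out_of_range_percent():
    for bad in (-1, 101):
        try:
            apply_discount(80.0, bad)
        except ValueError:
            continue
        raise AssertionError(f"expected ValueError for {bad}")
```

Only the last three tests would fail if someone removed the range check or changed the rounding, which is what makes them useful as regression protection.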

This proactive posture creates a feedback loop: Qodo identifies a bug in a PR, then generates a test that would have caught that bug before the next regression. The review finding and the preventive test are produced together. Users consistently report that Qodo generates tests for edge cases they would not have written independently, and occasionally uncovers bugs during the test generation process.

Cody’s test generation is on-demand and codebase-aware. Developers ask Cody to write tests through the chat interface or inline prompts, and Cody generates tests informed by the full repository context - matching the testing framework, patterns, and conventions already in use. Because Cody understands how your team writes tests (from indexing existing test files), its generated tests fit the codebase more naturally than tests from tools without codebase awareness.

For teams with an established testing culture who want on-demand, convention-aware test generation as part of coding, Cody’s approach is natural and well-integrated. For teams trying to systematically close test coverage debt or enforce coverage standards on every PR, Qodo’s automated gap detection produces more consistent outcomes without relying on developer initiative.

For a broader discussion of automated testing tools, see our how to automate code review guide.

Code Completion and IDE Assistance

Code completion is Cody’s domain. Qodo does not offer it.

Cody’s inline completion is available across VS Code, JetBrains, Neovim, and Emacs. Suggestions appear as you type and can be accepted with Tab - the same UX established by GitHub Copilot. What distinguishes Cody’s completion is the retrieval-augmented context: when generating a suggestion, Cody retrieves relevant code from across your repositories to produce completions that reference existing patterns, utility functions, and conventions in your codebase rather than defaulting to generic approaches.

The Free plan limits completions to 200 per day. The Pro plan at $9/user/month provides unlimited completions with access to Claude, GPT-4, and Gemini. Enterprise customers can configure BYOK to route completions through their own model access agreements. For teams evaluating Cody against GitHub Copilot primarily on completion quality, the codebase-aware retrieval is Cody’s primary differentiator - particularly for large, complex codebases where “write this the way our team does it” matters.

Qodo’s IDE plugin for VS Code and JetBrains focuses on review and test generation, not completion. The plugin brings shift-left quality work into the development environment: reviewing code before committing, running the /test command to generate tests locally, and getting quality improvement suggestions on selected code. The plugin supports multiple AI models including GPT-4o, Claude 3.5 Sonnet, and DeepSeek-R1, with Local LLM support through Ollama for fully offline operation.

Qodo does not generate inline code suggestions as you type. It is a quality tool that lives in the IDE, not a coding accelerator. Teams that want both automated quality gates and AI-assisted writing must use a separate completion tool alongside Qodo.

Model Flexibility and BYOK

Model flexibility is an increasingly important dimension for enterprise procurement, and both tools handle it differently.

Cody’s model flexibility is a genuine selling point. The Free and Pro plans provide access to Claude 3.5 Sonnet, GPT-4o, and Gemini Pro. Enterprise customers can use BYOK - routing inference through their own Anthropic, OpenAI, or Google API keys. This means organizations with existing LLM vendor contracts can apply those contracts to Cody without paying twice. In some configurations, teams can also deploy open-weight models on their own infrastructure to satisfy data residency requirements.

This flexibility matters for procurement teams negotiating consolidated AI vendor agreements and for organizations where AI spend is scrutinized at the model-provider level. No other mainstream AI coding assistant offers the same breadth of BYOK support.

Qodo’s model support covers GPT-4o, Claude 3.5 Sonnet, Gemini 2.0 Flash, DeepSeek-R1, and o3-mini, with Local LLM support through Ollama. In the Teams and Enterprise plans, Qodo selects appropriate models for each review task automatically. Premium models like Claude Opus cost additional credits per request. Qodo does not offer BYOK in the same way as Cody - teams use the models Qodo supports through the Qodo platform rather than routing through their own API keys.

For organizations with strong LLM vendor preferences or existing contracts, Cody’s BYOK model is a meaningful procurement advantage.

Enterprise Deployment and Privacy

Both tools support enterprise deployment with strong privacy controls, but with different maturity profiles and architectural approaches.

Cody Enterprise leverages Sourcegraph’s years of enterprise deployment experience. Self-hosted Sourcegraph deployments can run entirely within an organization’s own infrastructure, with the Cody AI assistant accessing the self-hosted code intelligence backend. BYOK routes LLM inference through the organization’s own API keys. SSO and SAML are standard. For organizations already running self-hosted Sourcegraph, adding Cody Enterprise is an incremental deployment, not a net-new infrastructure footprint.

Qodo Enterprise offers on-premises and air-gapped deployment through the full Qodo platform and the open-source PR-Agent foundation. Teams can inspect the review logic (via PR-Agent), deploy in environments with no internet connectivity, and benefit from SSO, enterprise dashboards, and a 2-business-day SLA. The open-source PR-Agent foundation is a unique transparency advantage - no other commercial AI code review tool allows this level of inspection and auditability.

For teams in regulated industries, both tools address deployment requirements. The key distinction is toolchain alignment: if your organization runs Sourcegraph already, Cody Enterprise extends naturally from your existing infrastructure investment. If your organization needs the deepest available code review quality in an air-gapped environment, Qodo Enterprise built on PR-Agent is purpose-built for that requirement.

See our AI code review in enterprise environments guide for broader deployment considerations.

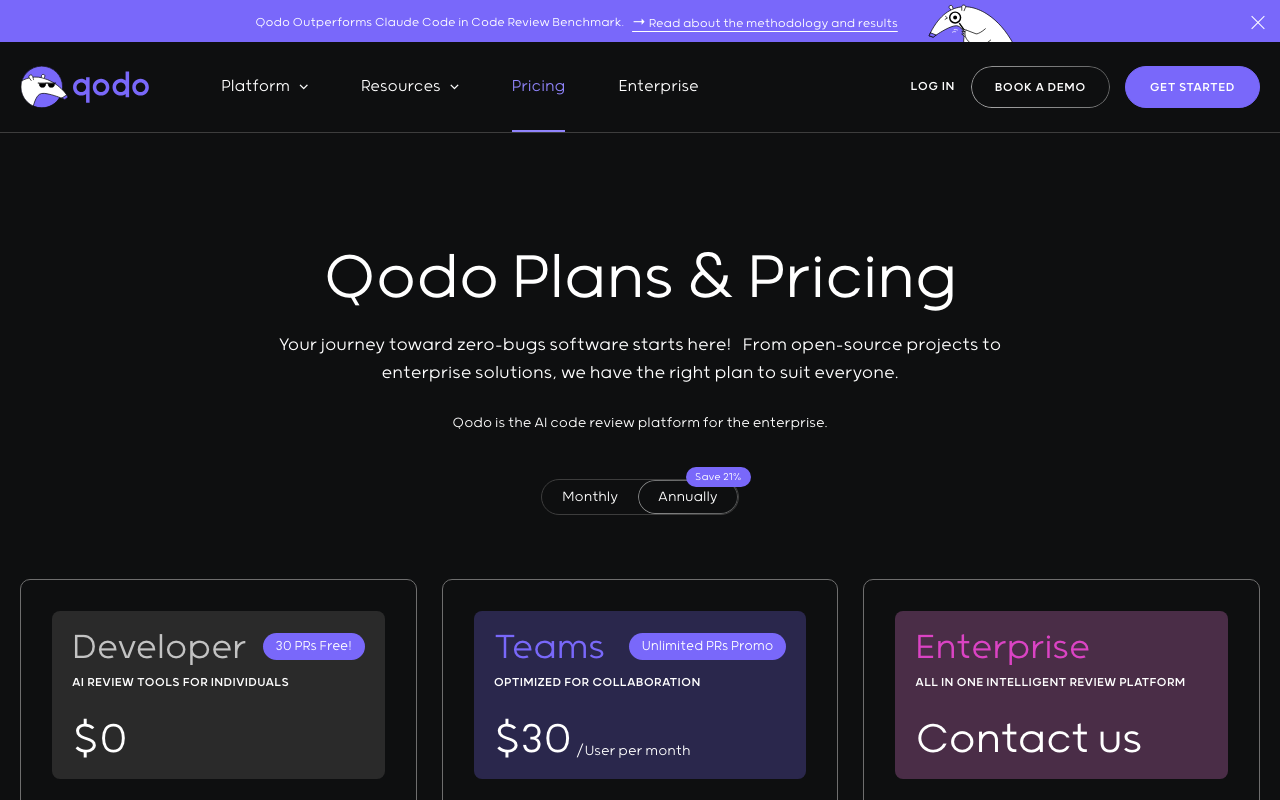

Pricing Comparison

Qodo Pricing

| Plan | Price | Key Capabilities |
|---|---|---|
| Developer (Free) | $0 | 30 PR reviews/month, 250 IDE/CLI credits/month, community support |
| Teams | $30/user/month | Unlimited PR reviews (current promotion), 2,500 credits/user/month, no data retention, private support |
| Enterprise | Custom | Context engine, multi-repo intelligence, on-premises/air-gapped deployment, SSO, 2-business-day SLA |

The Qodo credit system applies to IDE and CLI interactions. Standard operations cost 1 credit. Premium models cost more - Claude Opus costs 5 credits per request. Credits reset on a 30-day rolling schedule from first use. The Teams plan’s unlimited PR review offering is a current promotion; the standard allowance is 20 PRs per user per month, so confirm current terms before committing to annual pricing.
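A quick way to reason about the credit system is to convert the monthly allowance into request counts. The sketch below uses only the figures quoted above (1 credit for standard operations, 5 for Claude Opus, 2,500 credits per Teams user); the usage mix is a made-up example, and other premium models may be priced differently.

```python
CREDIT_COST = {"standard": 1, "claude_opus": 5}  # credits per request, from the text above
TEAMS_MONTHLY_CREDITS = 2500                     # per user, Teams plan


def max_requests(allowance: int, model: str) -> int:
    """How many requests of one kind fit into the allowance."""
    return allowance // CREDIT_COST[model]


def credits_used(usage: dict[str, int]) -> int:
    """Total credits for a mix of requests, e.g. {'standard': 10, 'claude_opus': 2}."""
    return sum(CREDIT_COST[model] * count for model, count in usage.items())


# Hypothetical monthly usage for one developer.
usage = {"standard": 1000, "claude_opus": 200}
remaining = TEAMS_MONTHLY_CREDITS - credits_used(usage)

print(max_requests(TEAMS_MONTHLY_CREDITS, "claude_opus"))  # Opus-only ceiling
print(remaining)                                           # credits left after the mix
```

In this example an Opus-only workflow caps out at 500 requests per month, so heavy premium-model users should check their expected volume against the allowance.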

Sourcegraph Cody Pricing

| Plan | Price | Key Capabilities |
|---|---|---|
| Free | $0 | 200 autocomplete suggestions/day, limited chat queries, VS Code and JetBrains |
| Pro | $9/user/month | Unlimited autocomplete and chat, Claude, GPT-4o, and Gemini access, all IDEs |
| Enterprise | Custom | BYOK, self-hosted Sourcegraph, SSO/SAML, all-repo context, admin controls |

Cody’s pricing structure is straightforward. The Free tier is genuinely useful for evaluation but limited at 200 completions per day. The Pro tier at $9/user/month removes all usage limits and provides multi-model access. Enterprise pricing is custom and typically includes the full Sourcegraph platform licensing rather than Cody as a standalone product.

Side-by-Side Cost at Scale

| Team Size | Qodo Teams (Annual) | Cody Pro (Annual) | Cody Pro + Qodo Teams |
|---|---|---|---|
| 5 developers | $1,800/year | $540/year | $2,340/year |
| 10 developers | $3,600/year | $1,080/year | $4,680/year |
| 25 developers | $9,000/year | $2,700/year | $11,700/year |
| 50 developers | $18,000/year | $5,400/year | $23,400/year |

For teams that only need code completion and codebase-aware AI assistance, Cody Pro at $9/user/month is significantly cheaper than Qodo Teams at $30/user/month. For teams that need both capabilities - AI assistance during coding and automated review at PR time - combining both tools at $39/user/month provides coverage across the full development workflow.
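The cost table is simply the monthly list price multiplied by seats and by twelve months. The small helper below reproduces it so you can plug in your own team size; it assumes the list prices quoted in this article and ignores any annual-billing discounts or promotions.

```python
QODO_TEAMS = 30  # USD per user per month
CODY_PRO = 9     # USD per user per month


def annual_cost(developers: int, *monthly_prices: int) -> int:
    """Seats x combined monthly price x 12 months."""
    return developers * sum(monthly_prices) * 12


for team_size in (5, 10, 25, 50):
    print(
        team_size,
        annual_cost(team_size, QODO_TEAMS),
        annual_cost(team_size, CODY_PRO),
        annual_cost(team_size, QODO_TEAMS, CODY_PRO),
    )
```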

For pricing context on related tools, see our GitHub Copilot pricing guide and CodeRabbit pricing guide.

Use Cases - When to Choose Each Tool

When Qodo Makes More Sense

Teams with low test coverage who want to close the gap systematically. Qodo’s proactive test generation finds coverage gaps and generates tests automatically during PR review - not in response to developer prompts. For teams with accumulated test debt and no realistic path to covering it through manual effort, Qodo provides a mechanism that compounds over time: every PR reviewed is also an opportunity to improve test coverage on the code that changed.

Organizations on GitLab, Bitbucket, or Azure DevOps that want automated AI code review. Qodo’s four-platform Git support (plus CodeCommit and Gitea through PR-Agent) makes it one of the very few dedicated AI code review tools that work outside GitHub. Cody’s code search and context retrieval connect to GitHub, GitLab, Bitbucket, and Gerrit, but Cody does not provide automated PR review on any platform. For Azure DevOps teams specifically, Qodo is one of the strongest available options for systematic automated review.

Teams that need benchmark-validated review accuracy. If catching bugs before production is the primary metric, Qodo’s 60.1% F1 score represents the current measured state of the art in AI code review. Cody can answer code review questions well, but it has not been independently benchmarked on PR review accuracy and does not run automatically on every PR.

Teams with open-source transparency requirements. PR-Agent is publicly available, inspectable, and community-contributed. Organizations that need to audit what their AI review tool does with their code - or that operate in environments requiring open-source review chains - have an option with Qodo that no other commercial review tool provides.

When Cody Makes More Sense

Large engineering organizations with complex, multi-repository codebases. Cody’s full-codebase indexing is most valuable when the codebase is large enough that no single developer can hold it all in working memory. When developers routinely ask “how does X work?” or “where is this pattern used?”, and the answer spans multiple files and services, Cody’s retrieval-augmented responses reduce the cognitive load of navigating the codebase dramatically.

Teams where developer onboarding speed is a key metric. New developers joining a large codebase can ask Cody questions that would otherwise require bothering senior engineers or spending hours reading code. “What’s the standard way to add a new API endpoint in this service?”, “Which libraries does this team use for logging?”, and “How do we handle database migrations?” become answered questions rather than coordination overhead.

Organizations with existing LLM vendor contracts. Cody’s BYOK support lets organizations route inference through their own Anthropic, OpenAI, or Google agreements. If your organization has already negotiated enterprise pricing with one of these providers, Cody can apply that contract to your AI coding toolchain without adding a new vendor relationship.

Teams that want code completion with strong codebase awareness. Cody’s retrieval-augmented completions are particularly useful in large codebases where “write this the way the team writes it” is more valuable than “write this in a generally correct way.” For teams already using Sourcegraph for code search, Cody integrates naturally into an established workflow.

The Codebase Context Difference in Practice

Cody’s retrieval-augmented context deserves concrete illustration because it represents a qualitative difference in how the tool helps, not just a benchmark number.

Consider a developer joining a team that maintains a payments microservice architecture across eight repositories. In their first week, they are asked to add a new payment method to the checkout service.

With a context-window-limited AI assistant, they can ask about the file they have open - getting help with syntax, basic patterns, and generic payment processing logic. Understanding how the checkout service relates to the validation service, the fraud detection service, and the notification service requires reading code manually or asking colleagues.

With Cody indexed across all eight repositories, the developer can ask: “How does the existing payment method integration in checkout-service connect to fraud-detection-service?” and receive a synthesized answer drawn from the actual integration code in both services. They can ask “What validation does payment-validation-service apply to new payment types?” and get the specific validation logic with references to the relevant files. The onboarding that would take days of code reading compresses into hours of targeted Cody conversations.

Qodo would not address these questions. Qodo reviews the PR when the developer opens it - finding bugs in the implementation, identifying uncovered branches, and generating tests. The review quality is high, but it operates at the end of the development process, not during it.

Neither tool replaces the other in this scenario. Cody accelerates the development phase; Qodo improves the review phase.

Security Considerations

Qodo’s security approach focuses on identifying security vulnerabilities during automated code review. The multi-agent architecture includes a dedicated security agent that detects common vulnerability patterns - SQL injection, XSS vectors, insecure deserialization, and authentication logic errors - in PR diffs. Custom review instructions can enforce organization-specific security rules. For deeper vulnerability scanning beyond what AI review catches, pairing Qodo with dedicated SAST tools like Semgrep or Snyk Code is the recommended architecture.
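As an illustration of the kind of pattern a security-focused reviewer (human or AI) looks for in a diff, the sketch below contrasts a SQL-injectable query with its parameterized fix using Python's built-in `sqlite3` module. The table, data, and function names are hypothetical, and this is a generic example, not output from Qodo.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", "admin"), ("bob", "user")])


def find_user_unsafe(name: str):
    # Vulnerable: user input is interpolated directly into the SQL string.
    return conn.execute(f"SELECT name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(name: str):
    # Parameterized: the value is passed separately from the query text.
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


payload = "x' OR '1'='1"
print(len(find_user_unsafe(payload)))  # the injected OR clause matches every row
print(len(find_user_safe(payload)))    # the payload is treated as a literal name
```

The unsafe version returns both users for a name that does not exist, while the parameterized version returns nothing, which is the behavior a reviewer would ask for.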

Cody’s security approach is primarily about securing the AI tool within your workflow rather than scanning for vulnerabilities in your code. BYOK ensures LLM inference goes through your own API keys and vendor agreements. Self-hosted Sourcegraph deployment keeps code intelligence infrastructure inside your perimeter. Data retention controls address privacy concerns for regulated industries. Cody can answer security-related questions and identify patterns that look insecure when asked, but it does not run automated security analysis as a structured workflow.

For teams in security-sensitive environments, the two tools address different threat models: Cody secures the AI tool’s access to your code, Qodo uses AI to find security bugs in your code. See our AI code review for security guide for a deeper treatment.

Alternatives to Consider

Neither Qodo nor Cody is the right answer for every team. Several alternatives are worth evaluating alongside them.

CodeRabbit is the most widely deployed dedicated AI code review tool, with over 2 million connected repositories. It combines AST-based analysis with AI reasoning and includes 40+ built-in deterministic linters. At $12-24/user/month, it is less expensive than Qodo Teams and focuses exclusively on review quality. It does not offer test generation or codebase-wide context retrieval. See our CodeRabbit vs Qodo comparison for a detailed breakdown.

CodeAnt AI is an emerging alternative at $24-40/user/month that combines AI code review with security scanning and code quality metrics in a single platform. For teams that want review and security analysis consolidated without separate SAST tooling, CodeAnt AI is worth evaluating as a mid-market option between CodeRabbit and Qodo.

GitHub Copilot provides code completion, AI chat, code review, and an autonomous coding agent at $19/user/month for Business. For teams on GitHub without strict data sovereignty requirements, Copilot is the broadest single-subscription option. See our Qodo vs GitHub Copilot comparison and our GitHub Copilot vs Tabnine comparison for detailed matchups.

Tabnine is the strongest alternative for privacy-first code completion, with air-gapped on-premise deployment and IP indemnification available on the Enterprise plan at $39/user/month. For teams that need Cody-style completion with the strongest available data sovereignty guarantees, Tabnine’s deployment flexibility is unmatched. See our Qodo vs Tabnine comparison for a full breakdown.

Greptile indexes your entire codebase and uses full-codebase context for PR review - combining Cody’s context retrieval strength with Qodo’s automated review posture. Greptile achieved an 82% bug catch rate in independent benchmarks. It supports only GitHub and GitLab, has no free tier, and does not generate tests. For teams on GitHub or GitLab that want the deepest possible automated review with whole-codebase context, Greptile is worth evaluating. See our CodeRabbit vs Greptile comparison for related context.

For a comprehensive market view, see our best AI code review tools roundup and best AI tools for developers guide.

Verdict - Which Should You Choose?

The Qodo vs Cody comparison resolves clearly once you identify which workflow problem your team needs solved most urgently.

Choose Qodo if your primary need is a systematic, automated PR review workflow that catches bugs and generates tests without requiring developer action on every PR. Qodo is a quality gate - it runs on its own, produces consistent findings, and improves what ships. Its 60.1% F1 benchmark score represents the current measured state of the art in AI code review, and its proactive test generation is unique in the market. Qodo works across GitHub, GitLab, Bitbucket, and Azure DevOps, making it one of the few serious options for teams not standardized on GitHub.

Choose Cody if your primary need is a codebase-aware AI assistant that helps developers navigate, understand, and write code faster. Cody is a development accelerator - it makes developers more effective during the coding phase by giving them access to the full context of your codebase. Its retrieval-augmented completions and chat responses are especially useful in large, complex codebases where context-window-limited tools fall short. At $9/user/month for the Pro plan, it is among the most affordable ways to get genuine codebase-aware AI assistance.

Run both if your team has budget for complete workflow coverage. Cody at $9/user/month handles the development phase - completions, chat, codebase navigation, on-demand code generation. Qodo at $30/user/month handles the review phase - automated PR review, test generation, quality gates. The combined $39/user/month covers both workflow stages without overlap, since the tools operate at different points in the development lifecycle and do not duplicate each other’s primary capabilities.
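To make the combined figure concrete for a given team size, here is a minimal sketch of the arithmetic. It assumes the list prices quoted in this article, one seat per developer in each tool, and no volume or annual-billing discounts. The function name and the team sizes are hypothetical and chosen only for illustration.

```python
# Per-seat list prices quoted in this article (USD per user per month).
# Assumption: no discounts, and every developer holds a seat in each tool.
CODY_PRO = 9
QODO_TEAMS = 30


def monthly_cost(seats: int, cody: bool = True, qodo: bool = True) -> int:
    """Return the total monthly cost in USD for a team of `seats` developers."""
    if seats < 0:
        raise ValueError("seats must be non-negative")
    per_seat = (CODY_PRO if cody else 0) + (QODO_TEAMS if qodo else 0)
    return seats * per_seat


if __name__ == "__main__":
    # Hypothetical team sizes, not taken from any vendor documentation.
    for seats in (5, 25):
        both = monthly_cost(seats)
        print(f"{seats} seats: ${both}/month, ${both * 12}/year for both tools")
```

Passing `cody=False` or `qodo=False` gives the single-tool cost, which is useful when comparing a staged rollout against adopting both at once.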

Practical recommendations by team profile:

- Solo developers and small teams on a budget: Start with Cody Pro at $9/user/month for codebase-aware completion and chat. Add Qodo’s free tier (30 PR reviews/month) to evaluate whether automated review delivers enough value to justify the Teams upgrade.

- Teams of 5-25 on GitHub focused on code quality and test coverage: Qodo Teams at $30/user/month delivers the highest benchmark-validated review accuracy and proactive test generation. Add Cody Pro at $9/user/month if codebase-aware completion and navigation are also priorities.

- Teams on GitLab or Azure DevOps that want automated AI review: Qodo is one of the few dedicated options with serious multi-platform support. Cody’s PR review is conversational only, making Qodo the stronger systematic review choice for non-GitHub platforms.

- Large engineering organizations with complex multi-repo codebases: Cody Enterprise’s full-organization codebase indexing is most impactful at this scale. Pair with Qodo Enterprise for systematic PR review quality across all repositories. The combination addresses both developer productivity during coding and quality enforcement at review time.

- Regulated industries with data sovereignty requirements: Both tools offer enterprise deployment options. Evaluate Cody Enterprise’s self-hosted Sourcegraph model alongside Qodo Enterprise’s PR-Agent-based air-gapped deployment and request detailed infrastructure documentation from each vendor before committing.

The bottom line: Qodo and Cody are not competing for the same workflow slot. Qodo is the right investment when the goal is improving the quality of what ships. Cody is the right investment when the goal is improving the speed and effectiveness of how developers build. For teams that care about both, the tools complement each other.

Frequently Asked Questions

Is Qodo better than Cody for code review?

For dedicated, automated PR code review, Qodo is the stronger tool. Its multi-agent architecture in Qodo 2.0 achieved the highest F1 score (60.1%) among eight tested AI code review tools. Cody does not offer automated PR review as a structured workflow - it is a conversational AI assistant and code completion tool that developers query manually. Cody's advantage over Qodo is its ability to index and reason over your entire codebase, making it excellent for exploratory questions, understanding unfamiliar code, and targeted chat-based review help. If your team needs systematic, automated PR review that runs without prompting, Qodo is the right choice. If your team needs a smart coding assistant that understands your whole repository, Cody fills that gap.
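For readers unfamiliar with the metric, F1 is the harmonic mean of precision (how many reported issues were real) and recall (how many real issues were reported). The sketch below shows the standard calculation, assuming the benchmark uses that standard definition. The counts in the usage example are hypothetical and are not taken from the benchmark.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Compute the standard F1 score from raw counts of review findings."""
    predicted = true_positives + false_positives  # issues the tool reported
    actual = true_positives + false_negatives     # issues that really exist
    if predicted == 0 or actual == 0:
        return 0.0
    precision = true_positives / predicted
    recall = true_positives / actual
    if precision + recall == 0:
        return 0.0
    # Harmonic mean of precision and recall.
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Hypothetical counts for illustration only.
    print(f"F1 = {f1_score(true_positives=60, false_positives=40, false_negatives=40):.3f}")
```

Because F1 penalizes both missed bugs and noisy false alarms, a tool cannot score well simply by flagging everything or by staying silent.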

Does Cody generate tests automatically?

Cody can generate tests when asked through its chat interface, leveraging its codebase context to produce tests aligned with existing patterns and frameworks in your repository. However, it does not proactively scan PRs for coverage gaps and generate tests without prompting. Qodo's test generation is proactive - during PR review, Qodo automatically identifies untested code paths and generates unit tests to fill those gaps without any developer request. For teams trying to systematically close test coverage debt, Qodo's autonomous approach produces more consistent outcomes. For developers who want on-demand test generation with deep awareness of their codebase's conventions, Cody's chat-driven approach with full repository context is a capable alternative.

What is Sourcegraph Cody and how does it differ from regular AI coding assistants?

Cody is Sourcegraph's AI coding assistant, built on top of Sourcegraph's code intelligence and search platform. What distinguishes Cody from tools like GitHub Copilot or Tabnine is its context retrieval architecture - Cody indexes your entire codebase, including all repositories in your organization, and retrieves relevant context from any of them to answer questions and generate code. Rather than relying solely on the open file or a fixed window of surrounding code, Cody can pull definitions, usages, patterns, and related files from anywhere in your repositories. This makes it particularly powerful for navigating large, complex codebases and for understanding how a change in one place affects other parts of the system. Cody also supports Bring Your Own Key (BYOK) and multiple LLM providers including Claude, GPT-4, and Gemini.

How much does Cody cost compared to Qodo?

Cody's Free plan supports individual developers with 200 autocomplete suggestions per day and limited chat queries. The Pro plan costs $9/user/month with unlimited autocomplete and chat. The Enterprise plan is custom-priced and includes single-tenant deployment, SSO, SAML, and access to the full Sourcegraph platform including code search. Qodo's free Developer plan includes 30 PR reviews and 250 IDE/CLI credits per month. The Teams plan costs $30/user/month with unlimited PR reviews (current promotion) and 2,500 monthly credits. Enterprise is custom-priced with on-premises and air-gapped deployment, context engine, and SSO. For individual developers, Cody Pro at $9/user/month is significantly cheaper than Qodo Teams at $30/user/month. For enterprise teams that need both codebase-aware AI assistance and deep automated PR review, running both tools at their respective price points may be justified.

Does Cody support on-premise deployment?

Yes. Cody Enterprise supports self-hosted Sourcegraph deployment, which means the Sourcegraph backend - including the code intelligence and indexing infrastructure - can run entirely within your own infrastructure. This addresses data sovereignty requirements for regulated industries. Cody also supports BYOK (Bring Your Own Key), so LLM inference calls can go through your own API keys and, in some configurations, your own model endpoints. Qodo also offers on-premises and air-gapped deployment on its Enterprise plan through the full Qodo platform and its open-source PR-Agent foundation. Both tools provide enterprise deployment options, but the maturity and specifics differ. Teams with stringent air-gapped requirements should evaluate both and request detailed infrastructure documentation from each vendor.

Which tool has better codebase context awareness - Qodo or Cody?

Cody is the clear leader for broad, repository-spanning codebase context. Its entire architecture is built on Sourcegraph's code intelligence platform, which indexes all repositories in your organization and uses semantic search to retrieve relevant context from anywhere in your codebase when answering questions or generating code. Qodo's context engine (Enterprise plan only) focuses specifically on multi-repo PR intelligence - understanding cross-service dependencies and how changes in one repository affect others for the purpose of deeper review. Cody's context retrieval is broader and available at lower price points. Qodo's context is narrower but purpose-built for review accuracy. For general codebase exploration, onboarding, and understanding large systems, Cody's context awareness is more immediately useful. For detecting cross-repo PR impacts, Qodo's Enterprise context engine is more specialized.

Can I use Qodo and Cody together?

Yes, and the combination is complementary rather than redundant. Cody handles code completion, codebase-aware chat, and on-demand code generation with full repository context - capabilities Qodo does not provide. Qodo handles automated PR review with multi-agent accuracy and proactive test generation - capabilities Cody does not provide as structured, automated workflows. The tools operate at different workflow stages: Cody assists while writing code, Qodo audits code at review time. The combined cost would be Cody Pro at $9/user/month plus Qodo Teams at $30/user/month, totaling $39/user/month. For teams that want deep codebase assistance during development and rigorous automated review at PR time, this combination covers both workflow stages without overlap.

Does Cody offer code completion like GitHub Copilot?

Yes. Cody provides inline code completion across VS Code, JetBrains, Neovim, and Emacs. Like GitHub Copilot, completions appear as you type and can be accepted with Tab. What distinguishes Cody's completion from Copilot's is the codebase context retrieval - Cody can draw on patterns and conventions from across your entire repository when generating suggestions, rather than relying primarily on the current file and recent context. In practice, this means Cody completions in a large microservice codebase can reference patterns from other services, existing utility functions, and established conventions in a way that context-window-limited tools cannot. The free plan limits completions to 200 per day. The Pro plan offers unlimited completions at $9/user/month.

Which tool works better for large enterprise codebases?

The answer depends on what capability matters most for your enterprise team. For large codebases where developer productivity suffers from navigating unfamiliar code and understanding system-wide dependencies, Cody Enterprise's full codebase indexing is transformative - developers can ask 'where is this function called?', 'what pattern does the team use for database transactions?', or 'what other services depend on this API?' and get accurate answers drawn from across the entire repository graph. For large codebases where PR review quality is the bottleneck and test coverage is a concern, Qodo Enterprise's multi-agent review and cross-repo impact analysis address those specific pain points. Large enterprises evaluating both tools should consider deploying Cody for developer assistance and Qodo for automated quality gates - they address distinct parts of the software delivery lifecycle.

Is Sourcegraph Cody open source?

Sourcegraph Cody's client components are open source and available on GitHub under the Apache 2.0 license. This includes the VS Code extension, JetBrains plugin, and other client-side code. The Sourcegraph backend platform that powers Cody's codebase indexing and context retrieval is available in a Community Edition for self-hosting but is proprietary in its full Enterprise form. Qodo's commercial platform is proprietary, but its core review engine is built on PR-Agent, which is fully open source on GitHub. Both tools have meaningful open-source components - Cody at the client layer, Qodo at the review engine layer - but neither is fully open source end-to-end.

What alternatives should I consider besides Qodo and Cody?

If you need dedicated AI PR review without Qodo's price point, CodeRabbit at $12-24/user/month is the most widely deployed option with AST-based analysis and 40+ built-in linters. CodeAnt AI is an emerging alternative at $24-40/user/month, combining AI code review with security scanning and code quality metrics in a single platform. For code completion with privacy controls similar to Cody's BYOK model, Tabnine Enterprise offers on-premise and air-gapped deployment at $39/user/month. GitHub Copilot at $19/user/month provides completion, chat, and code review in one subscription for teams on GitHub without strict data sovereignty requirements. For the deepest codebase context retrieval in a review context, Greptile indexes your entire codebase and uses that context for PR review, achieving an 82% bug catch rate in independent benchmarks.

What is the verdict - should I choose Qodo or Cody?

Choose Qodo if your team's primary need is systematic, automated PR code review with benchmark-validated accuracy, proactive test generation that closes coverage gaps without developer prompting, and broad Git platform support including Azure DevOps and GitLab. Qodo is a quality gate that improves what ships, not a development assistant that helps you write code faster. Choose Cody if your team's primary need is a context-aware AI coding assistant that understands your entire codebase - for faster navigation, smarter completions, on-demand code generation, and chat-based help that references your actual code patterns. Cody is a development accelerator, not a review gate. For teams that want both - systematic review quality and codebase-aware AI assistance - running Cody Pro ($9/user/month) alongside Qodo Teams ($30/user/month) is the most complete coverage of both workflow stages.

